Short introduction

Lidar sensors hold great promise for autonomous driving. Not only do they offer a complementary sensing technology for better perception of the driving scene, but they also overcome some of the pitfalls of classical RGB/IR cameras: the inability to provide perception in conditions of limited visibility such as fog or heavy rain, the inability to look through obstacles, reduced output quality in scenes with a high dynamic range (e.g., the transition from a tunnel to bright daylight), and reduced range due to fixed lenses and limited resolution. Broader adoption of gyroscopic lidars was hampered by their higher price and size, as well as by the need to mount them on top of the vehicle. Solid-state lidars (Innoviz is one of the leaders in this domain) bring a new wave of optimism that the technology could provide a safer automotive future at a reduced cost.

Figure 1 Innoviz ONE Lidar

The Innoviz ONE Lidar produces a highly dense 3D point cloud (~200k points, with a range of roughly 250 m and an HFOV/VFOV of 115°/25°) that enables scene segmentation (road, pavement, signs, vehicles) together with object detection, classification (car, truck, bus, motorcycle, and pedestrian), and tracking. Below we describe RTRK's efforts to achieve real-time performance of the algorithm pipeline on the Renesas V3H embedded platform.

Figure 2 Innoviz Lidar in action - scene segmentation, object detection, classification, and tracking

Target Renesas V3H evaluation platform and SoC overview

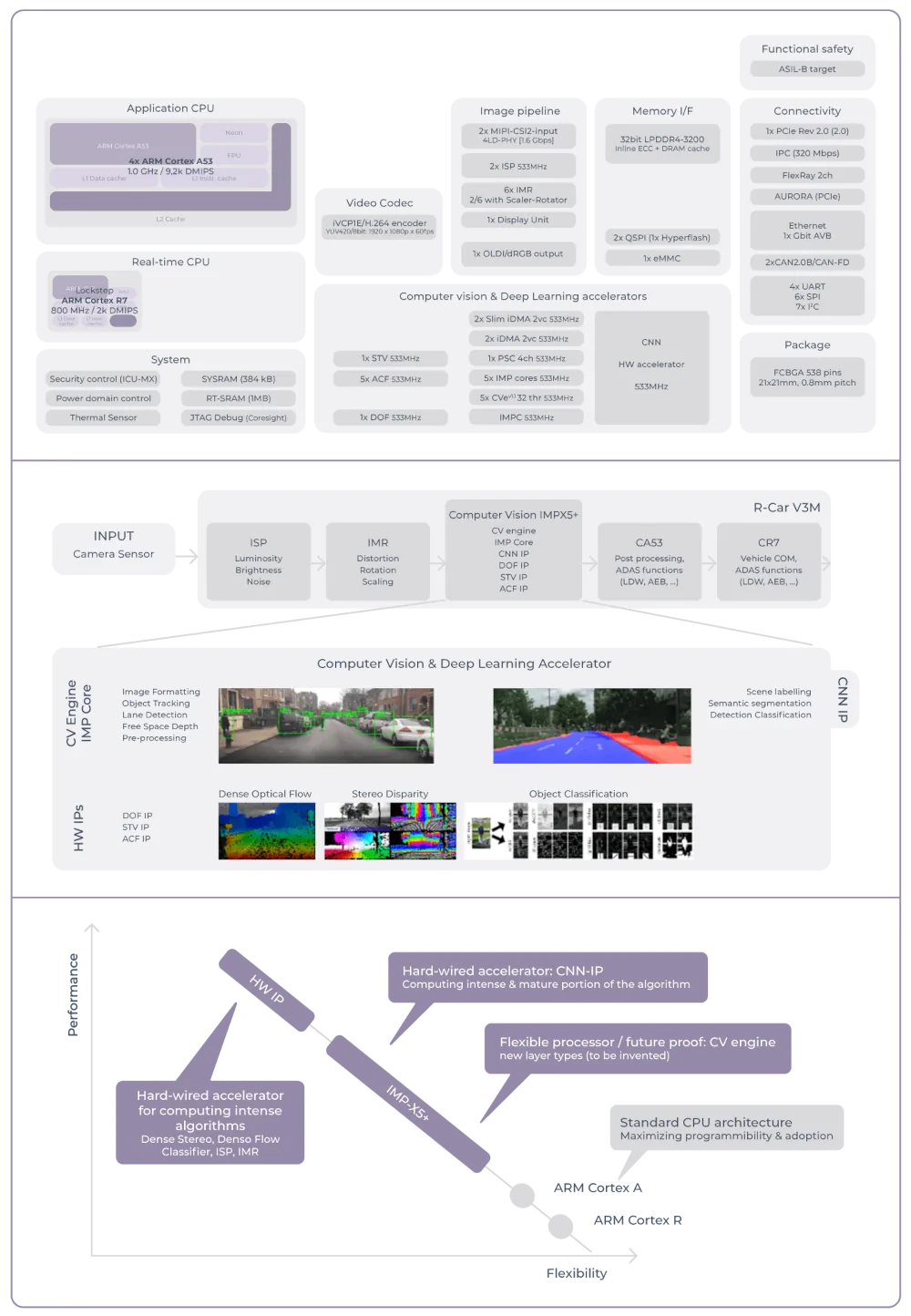

Renesas provides different products aimed at diverse segments of autonomous driving (urban, highway, motorway, etc.). For the Innoviz product line, the powerful V3H SoC was selected (best-in-class TOPS/Watt with ASIL B hardware-level functional safety). The following images give an overview of the available HW accelerators, their pipelining in typical input processing, and the differences among them.

Figure 3 Renesas V3H accelerators - block diagram, pipelining, differences and target use

For the Innoviz Lidar optimizations, the general-purpose ARM Cortex-A57 and parts of the IMP-X5+ computer vision and deep-learning subsystem (CNN IP and CV engines) were used.

ARM Cortex-A57 core:

- Floating-point SIMD instructions

- Good support for software cache prefetching

5x Computer Vision Engine cores:

- Multi-Thread Multi-Data architecture

- 4 cores per CV engine

- 8 HW-threads per core (independent or master-slave configuration)

- Floating-point regular and integer SIMD instructions

- Dedicated Local Working Memory (LWM) per core and Global Working Memory (GWM) per CVe

- DMA controllers: for LWM/GWM (TGDMAc), and for scratchpad-DDR (iDMAc) memory transfers

1x CNN-IP:

- Hardwired accelerator optimized for convolution, ReLU, pooling, and maxout layers

- Highly efficient 5x5 convolutions (the 3rd generation also supports efficient 1x1 and 3x3 convolutions)

- CNN Toolchain support for Caffe and ONNX model conversion

Project overview

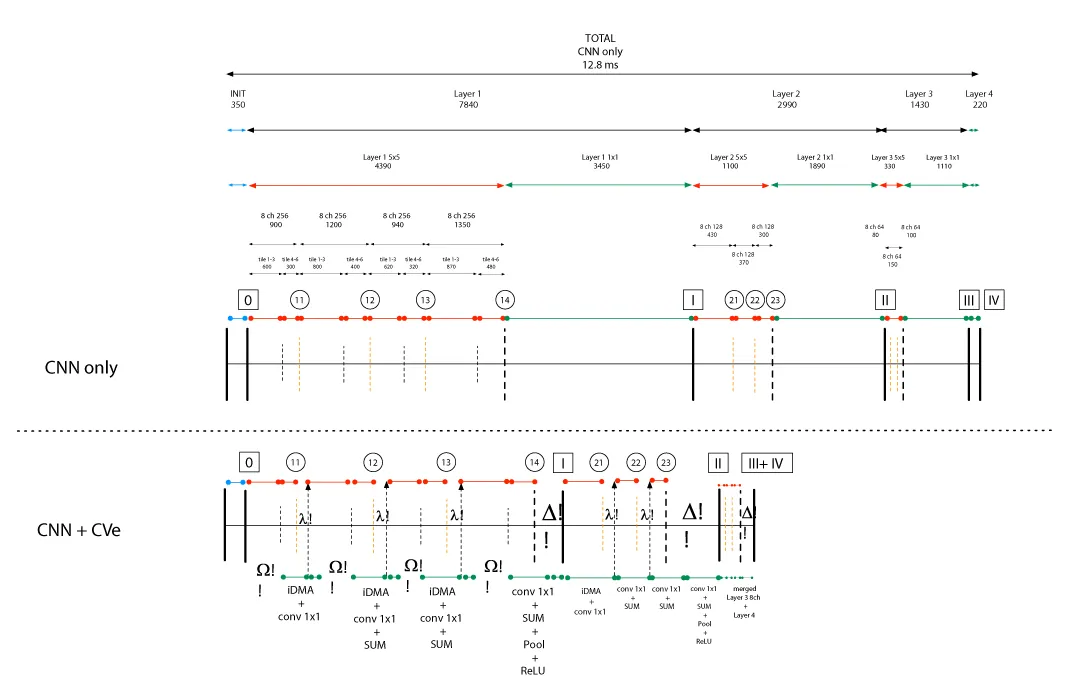

The starting point of the project was a convolutional neural network model that had been converted by the Renesas CNN Toolchain from Caffe into executable C code running on the ARM core and orchestrating the execution of command lists on the CNN IP. The CNN model initially had a runtime of 12.8 ms, and the idea was to perform an ambitious last-mile optimization in order to meet the target KPI of 6 ms. The CNN model used an architecture similar to the one in the following image (due to an NDA, the exact details are not shown). Yellow blocks represent convolution layers with a 5×5 kernel, violet blocks represent concatenation layers (merging the memory outputs of different convolutions), and grey blocks represent convolution layers with a 1×1 kernel. The entire model was composed of 4 similar blocks (4 convolutions 5×5, several concatenations in the middle, and a terminating convolution 1×1), executed sequentially to produce the final output. The model takes a 16-channel 256×256 input and produces a 56-channel 64×64 penultimate intermediate result that is finally mapped to an 11-channel 64×64 output feature map.

Figure 4 Innoviz perception CNN model – a snippet of a network section

The optimization challenges that were solved include the following aspects:

- Convolution 1x1: because CNN IP gen. 2 cannot compute 1x1 convolution layers efficiently (in contrast to 5x5 layers), the goal was to implement and optimize them on the CV engine.

- Parallelization of CNN IP and CVe: a CNN-only implementation executes layers sequentially. With the 1x1 convolutions offloaded to the CVe, the idea was to run both units concurrently to save time while respecting the memory dependencies, thereby optimizing the entire pipeline.

- Concatenation and double buffering: CNN model processing has memory dependencies and follows a strict execution order given by the high-level representation graph. To improve the runtime, an efficient double-buffering strategy was devised that exploits the identified independence between separate parts of the layers while using the faster but limited (2 MB) scratchpad memory.

- Tiling and tunneling: each convolution layer is split into several spatial and channel tiles in order to fit the available resources; the overlap between tiles has to be handled carefully because of calculation dependencies. Furthermore, in-place memory processing is enabled by logically grouping and sequentially calculating the corresponding tiles of several consecutive layers. This so-called tunneling strategy jointly considers execution on both the CNN IP and the CVe.

Convolution 1x1 optimizations

The first step was a straightforward baseline ARM implementation (96 ms), which served as the basis for an initial CVe port (33 ms); the final CVe version reached a sub-millisecond time (0.92 ms). The table below gives an overview of the times and acceleration factors.

| Layer size (pixels), in->out channels | Original @ CNN | Reference @ ARM | Final @ 5CVe | Acceleration vs. CNN / ARM |
|---|---|---|---|---|
| 256x256 32->24 | 3.45 ms | 96 ms | 0.92 ms | 3.75x / 100x |
| 128x128 48->32 | 1.89 ms | 52 ms | 0.46 ms | 4.1x / 113x |
| 64x64 56->56 | 1.1 ms | 24 ms | 0.22 ms | 5x / 109x |
| 64x64 56->11 | 0.22 ms | 6 ms | 0.05 ms | 4.4x / 120x |
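For orientation, a 1x1 convolution is a per-pixel weighted sum across input channels, so a straightforward reference fits in a few lines. The sketch below is pure Python for illustration only and is not the ARM or CVe code from the project; the names `conv1x1` and `macs_1x1`, the channel-major list layout, and the omission of bias and quantization are all assumptions.

```python
def conv1x1(x, w):
    """Naive 1x1 convolution (illustrative reference, no bias, no quantization).

    x: input as x[c_in][pixel]  (channel-major, pixels flattened; assumed layout)
    w: weights as w[c_out][c_in]
    returns y[c_out][pixel]
    """
    c_in = len(x)
    n_pix = len(x[0])
    y = []
    for row in w:
        assert len(row) == c_in, "weight row must have one coefficient per input channel"
        out = [0] * n_pix
        for c, coeff in enumerate(row):
            plane = x[c]
            for p in range(n_pix):
                out[p] += coeff * plane[p]  # one multiply-accumulate (MAC)
        y.append(out)
    return y


def macs_1x1(height, width, c_in, c_out):
    """Number of multiply-accumulates in a 1x1 convolution layer."""
    return height * width * c_in * c_out


if __name__ == "__main__":
    x = [[1, 2, 3, 4], [10, 20, 30, 40]]  # 2 channels, 2x2 image flattened
    w = [[1, 0], [0, 1], [1, 1]]          # 3 output channels
    print(conv1x1(x, w))                  # [[1, 2, 3, 4], [10, 20, 30, 40], [11, 22, 33, 44]]
    print(macs_1x1(256, 256, 32, 24))     # 50331648 MACs for the largest layer in the table
```

The MAC count gives a feel for the workload of the largest layer in the table; it says nothing about how the CVe implementation schedules those operations.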

Optimization of the 1x1 convolution layers was conducted in several iterative steps:

- Baseline implementation: direct translation of the ARM reference implementation to confirm bit-exactness and to establish a reference time.

- Divide workload across 5 x CVes + 0$-cache: parallelization of the computation. Inputs are divided into equal parts and the load is distributed across the 5 accelerators, then across the 4 cores of each, and then across the 8 HW threads of each core. Furthermore, data access was engineered to minimize 0$-cache misses, contributing to an overall reduction of the execution time.

- Global memory pipeline optimization: the baseline implementation worked with the input, intermediate, and output data stored in DDR. At this stage, we engineered a strategy to transfer the data from DDR to the scratchpad memory using the iDMA controller. A double-buffering approach further reduced the execution time by masking the transfers with useful calculations. Because the scratchpad memory is limited (2 MB) and the inputs are large, the buffer sizes had to be designed carefully at this stage.

- Local memory pipeline optimization: an additional memory optimization was orchestrated at the level of the Local Working Memory (16 KB per core). The Thread Group DMA controller was configured to execute a double-buffering scheme for transfers between the scratchpad and the LWM. Coordination between the iDMA and TGDMA controllers ensures maximum efficiency and a further reduction in processing time.

- SIMD instructions: use of the specialized GMAD (Group Multiply and Add) instruction allowed a several-fold reduction in raw processing time, owing to its high efficiency when working with 16 bit – 4 + 4 vectors.

- Assembly: a last-mile optimization step included low-level assembly interventions such as stall removal, instruction reordering, loop unrolling, multiplication by reciprocal values instead of division, etc.
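The hierarchical work split described in the steps above (5 CVes, 4 cores each, 8 HW threads per core) can be sketched as follows. This is a hedged illustration rather than the project's scheduler: the helper names `split_even` and `partition` are hypothetical, and splitting a flat range of pixels into contiguous chunks is an assumption, since the text only states that the inputs are divided into equal parts.

```python
N_CVE, CORES_PER_CVE, THREADS_PER_CORE = 5, 4, 8  # figures taken from the text


def split_even(start, stop, parts):
    """Split [start, stop) into `parts` contiguous ranges whose sizes differ by at most 1."""
    base, extra = divmod(stop - start, parts)
    ranges = []
    lo = start
    for i in range(parts):
        hi = lo + base + (1 if i < extra else 0)
        ranges.append((lo, hi))
        lo = hi
    return ranges


def partition(n_items):
    """Map every (cve, core, thread) triple to the half-open item range it processes."""
    plan = {}
    for cve, (a, b) in enumerate(split_even(0, n_items, N_CVE)):
        for core, (c, d) in enumerate(split_even(a, b, CORES_PER_CVE)):
            for thr, (e, f) in enumerate(split_even(c, d, THREADS_PER_CORE)):
                plan[(cve, core, thr)] = (e, f)
    return plan


if __name__ == "__main__":
    plan = partition(256 * 256)   # pixels of the largest 1x1 layer
    print(len(plan))              # 160 workers = 5 * 4 * 8
    print(plan[(0, 0, 0)])        # (0, 410)
```

With 160 hardware threads, each worker handles roughly 410 pixels of a 256x256 layer, which illustrates why per-thread data fits comfortably into small local buffers.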

Parallelization of CNN IP and CVe

The image below gives a high-level overview of the engineered scheme that allowed the CNN IP and the CV engine to execute in parallel. The scheme was applied to the last layer of one block (1x1 convolution) and the first layer of the next block (5x5 convolution). By processing the inputs in smaller parts, we could mask the DMA transfer delay and minimize the overall execution time.

Concatenation and double buffering

The CNN model includes concatenations (merging the outputs of different layers into the input of subsequent layers). Even though concatenations are instrumental to achieving the perception goals of the CNN model, they create memory dependencies and hamper the parallelization and double-buffering efforts. To resolve these sensitive dependencies while operating in a limited memory space (2 MB of scratchpad memory), a precise orchestration scheme was devised for the iDMA memory transfers.
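The effect of masking DMA transfers with computation can be illustrated with a small timing model. The sketch below is a generic two-buffer simulation, not the project's iDMA orchestration: the function names are hypothetical, the per-chunk times in the example are made-up numbers, and only input transfers are modeled.

```python
SCRATCHPAD_BYTES = 2 * 1024 * 1024  # 2 MB, as stated in the text


def sequential_time(n_chunks, t_dma, t_compute):
    """Total time when every transfer must finish before its computation starts."""
    return n_chunks * (t_dma + t_compute)


def double_buffered_time(n_chunks, t_dma, t_compute):
    """Total time with two buffers: chunk i+1 is transferred while chunk i is computed."""
    dma_done, comp_done = [], []
    for i in range(n_chunks):
        dma_free = dma_done[i - 1] if i >= 1 else 0   # DMA engine handles one transfer at a time
        buf_free = comp_done[i - 2] if i >= 2 else 0  # buffer i % 2 was last used by chunk i - 2
        dma_done.append(max(dma_free, buf_free) + t_dma)
        cpu_free = comp_done[i - 1] if i >= 1 else 0
        comp_done.append(max(dma_done[i], cpu_free) + t_compute)
    return comp_done[-1] if comp_done else 0


def max_chunk_bytes(n_buffers=2, budget=SCRATCHPAD_BYTES):
    """Largest chunk if the whole budget were used for input buffers only (an assumption)."""
    return budget // n_buffers


if __name__ == "__main__":
    print(sequential_time(4, 1, 2))       # 12
    print(double_buffered_time(4, 1, 2))  # 9: only the first transfer is exposed
    print(max_chunk_bytes())              # 1048576
```

In this model the total time approaches the larger of the two per-chunk costs multiplied by the number of chunks, so transfers are fully hidden only when computation per chunk takes at least as long as the transfer.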

Tiling and tunneling

Large inputs in any layer of the CNN model cannot be processed in a single run due to memory limitations. Thus, each layer is split into several channel and spatial tiles. Having multiple spatial tiles in turn requires handling the redundant tile-overlap data so that each tile can be calculated independently. Tile overlaps can grow depending on the stride values used in the convolution layers and should be accounted for when optimizing the pipeline. Once the tiling is done, further optimization is possible through the tunneling mechanism. Without tunneling, all spatial tiles of a single layer are processed before proceeding to the tiles of the next layer.
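To make the tile-overlap requirement concrete, the sketch below computes, along one spatial dimension, which input range each output tile needs. It is an illustration under stated assumptions, not the project's tiler: the function names are hypothetical, "same" zero padding is assumed, and the tile size of 64 is an arbitrary example value.

```python
def input_span(out_lo, out_hi, kernel, stride=1):
    """Input range [lo, hi) needed to compute outputs [out_lo, out_hi), before clamping."""
    pad = (kernel - 1) // 2  # assumed 'same' padding
    return out_lo * stride - pad, (out_hi - 1) * stride - pad + kernel


def tiles_1d(out_size, tile, kernel, stride=1):
    """List of (output_range, clamped_input_range) pairs for one dimension."""
    in_size = out_size * stride  # assumption that follows from 'same' padding
    result = []
    for lo in range(0, out_size, tile):
        hi = min(lo + tile, out_size)
        a, b = input_span(lo, hi, kernel, stride)
        result.append(((lo, hi), (max(a, 0), min(b, in_size))))
    return result


if __name__ == "__main__":
    for out_rng, in_rng in tiles_1d(256, 64, 5):
        print(out_rng, "needs input", in_rng)
    # (0, 64) needs input (0, 66)
    # (64, 128) needs input (62, 130)
    # (128, 192) needs input (126, 194)
    # (192, 256) needs input (190, 256)
```

For a 5x5 kernel with stride 1, neighbouring tiles share 4 input columns (kernel size minus 1); this shared data is what has to be transferred or kept twice when tiles are computed independently.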

The tunneling strategy rearranges the order in which the tiles are processed: a single spatial tile is fixed and then processed in-memory through a chain of several convolution layers. Once the chain for one spatial tile is complete, we proceed to the next tile, until the entire input image is eventually completed. The benefits of basic tunneling can be further increased by horizontally grouping several tiles into a larger bar and then processing it in-memory through the tunnel. Bar tunneling was implemented for chains of 5 layers in all 4 blocks of the CNN model and provided around a 15% improvement in overall speed.
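The difference between the two processing orders can be shown with a small scheduling sketch. It is a simplified illustration, not the project's implementation: the function names are hypothetical, stride 1 is assumed throughout, and the kernel list `[5, 5, 5, 5, 1]` is only a guess at what a 5-layer chain might look like, based on the block structure described earlier.

```python
def schedule(n_tiles, n_layers, tunneled):
    """Return the processing order as a list of (tile, layer) pairs."""
    if tunneled:
        # Tile-major: push one tile through the whole layer chain before the next tile.
        return [(t, l) for t in range(n_tiles) for l in range(n_layers)]
    # Layer-major: finish all tiles of a layer before moving to the next layer.
    return [(t, l) for l in range(n_layers) for t in range(n_tiles)]


def chain_halo(kernels):
    """Per-side halo (in pixels) needed at the chain input, assuming stride 1 everywhere."""
    return sum((k - 1) // 2 for k in kernels)


if __name__ == "__main__":
    print(schedule(2, 3, tunneled=False))  # [(0, 0), (1, 0), (0, 1), (1, 1), (0, 2), (1, 2)]
    print(schedule(2, 3, tunneled=True))   # [(0, 0), (0, 1), (0, 2), (1, 0), (1, 1), (1, 2)]
    print(chain_halo([5, 5, 5, 5, 1]))     # 8
```

The halo figure hints at the trade-off: if each tile chain is computed independently, the overlap grows with the length of the chain, and grouping tiles into wider bars reduces the number of tile boundaries where that overlap occurs.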

Final results

After all optimizations were applied, the overall CNN model ran in 5.8 ms, roughly a 2.2-fold improvement over the already efficient CNN-IP-only execution (12.8 ms).

Conclusion and possible improvement

Overall, we have shown that optimizing the CNN model was possible and that it can meet the real-time KPI expectations. As demonstrated, there are visible benefits to jointly using the CNN IP and the CVe. The major drawback of any tailored optimization involving a custom CNN model is the difficulty of generalizing, parametrizing, and automating it. Most of the ideas presented from the Innoviz Lidar project were afterwards carried over into the Renesas V3H gen. 3 SoC (efficient 1x1 and 3x3 convolutions) and also automated through the Renesas CNN Toolchain (double buffering, parallelization of CNN IP and CVe, tiling, tunneling).