Short introduction

Camera mirror replacement systems (CMRS), which are now replacing as many as six mirrors on trucks, may require two cameras on the driver’s side and two on the passenger side, with the video streams rendered together to create a very wide view. The main idea behind the algorithm discussed here is to detect a car approaching from behind and alert the driver in case of a potential collision. The algorithm was created by DENSO for PCs. Here we focus on porting and optimizing one such algorithm for an embedded target platform.

Target platform and SoC overview

Since the algorithm was originally created for PCs, the main goal is to port and optimize it for the ALPHA embedded platform. The ALPHA board consists of three interconnected TDA2x SoCs, one of which is used as the target SoC for porting and optimization. The platform is depicted in Figure 1.

Figure 1. – ALPHA board

The selected TDA2x SoC (colored in red) has the following resources.

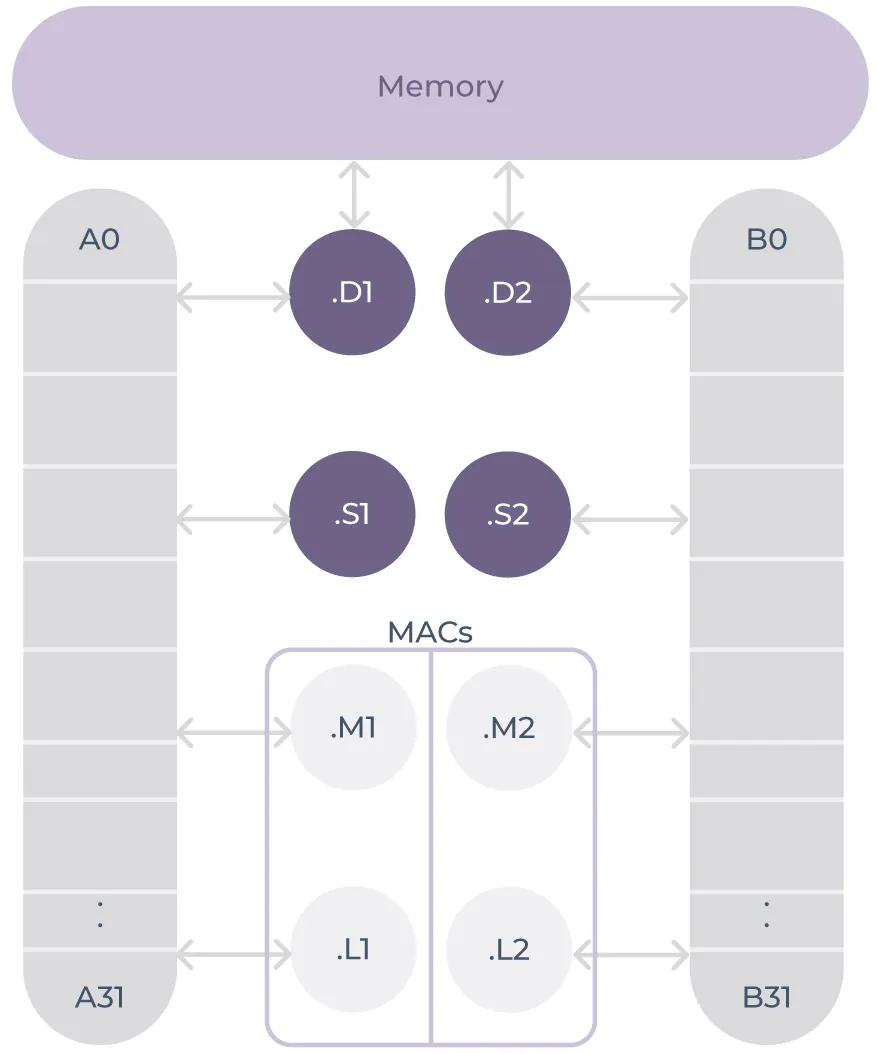

2x DSP C66x cores:

- SIMD Floating point

- Up to eight instructions executed per cycle

2x ARM Cortex-A15 cores:

- SIMD Floating point

- Good support for software cache prefetching

4x Embedded Vision Engine cores:

- Integer SIMD instructions

- 16 16×16 multipliers

- 768 bits/cycle memory access

- Enhanced DMA

2x dual-core ARM Cortex-M4:

- Intended as control processors, not data processing units

Project overview

The starting point was a PC application for single-frame car detection that processed one frame in ~40ms. It performed both car detection and car tracking at ~25FPS in release mode on a more demanding PC configuration. The algorithm itself can be split into four parts:

- Input frame processing and conversion from YUV422 to grayscale image

- Histogram of oriented gradients integral image computation

- Cascade classifier of predefined rectangle regions

- Kalman filter and YUV422 byte reordering

The initial step was porting this single-frame application from the PC to the target SoC. This was done in four, not entirely straightforward, steps:

- Removal of unused code

- Creation of adequate VisionSDK algorithm plugins and use case

- Implementation of stdio capabilities for VisionSDK on the ALPHA board in order to read the detection configuration

- Verification of detection results

There were two main challenges in this stage of the project. The first was removing polymorphism from the code base, since it would otherwise be hard to find the bottlenecks and optimize them afterwards. The second was removing the dependency on textual configuration files, since reading from the SD card was not possible on every SoC of the platform.

The first iteration yielded a port that ran on the A15 core only. To simplify comparison between the PC version (used as the ground-truth reference) and the ported and, later on, optimized version, an Ethernet connection was used instead of cameras. Even though the hardware usage was modest, the port was successful. However, with only the A15 handling both the network transfer and all stages of the algorithm, the bottleneck was obvious.

For code optimization, the target was processing a single 1280×800 video stream at 30FPS. Several values were monitored to verify the behavior of the code (Bounding Boxes, Confidence, Distance and Speed), with the PC version as the ground-truth reference.

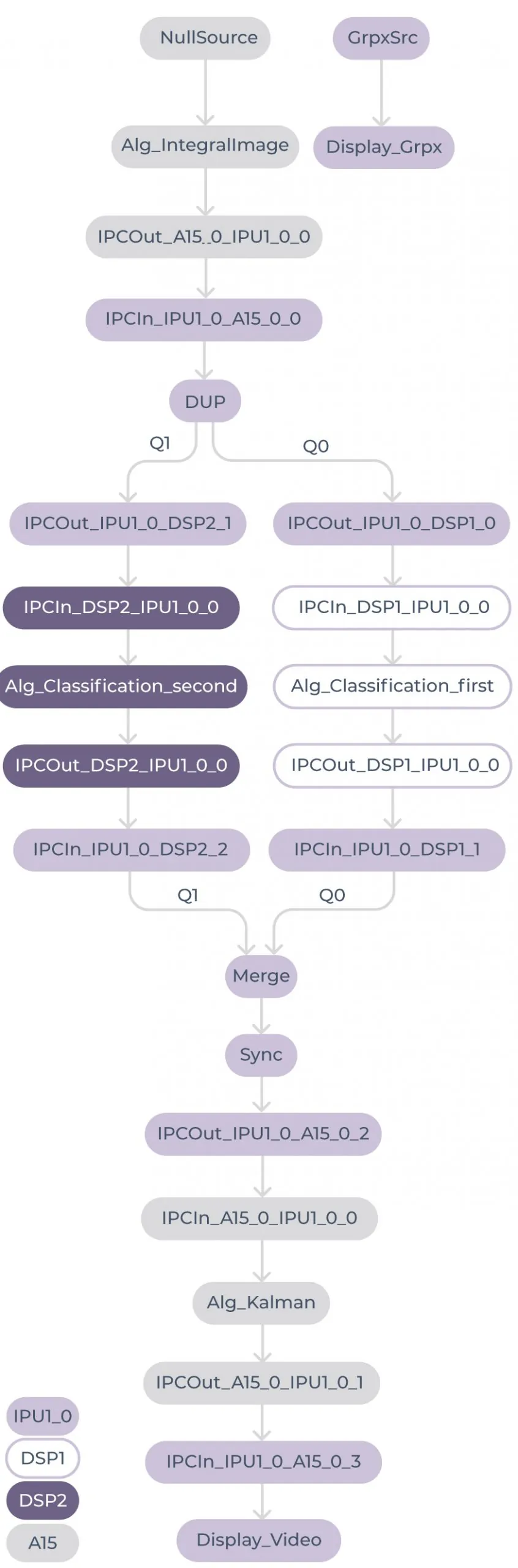

The first iteration of the optimization offloaded the classification part of the algorithm from the A15 core. Both DSPs were used, and the resulting use case is shown in Figure 2 (use case after the first iteration of optimization). This iteration led to the following observations:

- High demand on A15 due to network transfer

- Poor classification performance of DSPs

    - Slow memory accesses

    - High performance penalty for each floating point division

    - Almost no parallelization on instruction level

- Low demand of Kalman filter plugin

    - Kalman filter calculations: a few milliseconds

    - Conversion of YUV422 bytes order: a bit more

- Good integral image algorithm plugin on A15

    - Full NEON vectorization

    - Running at ~54ms

- Hardware still not utilized as it should be

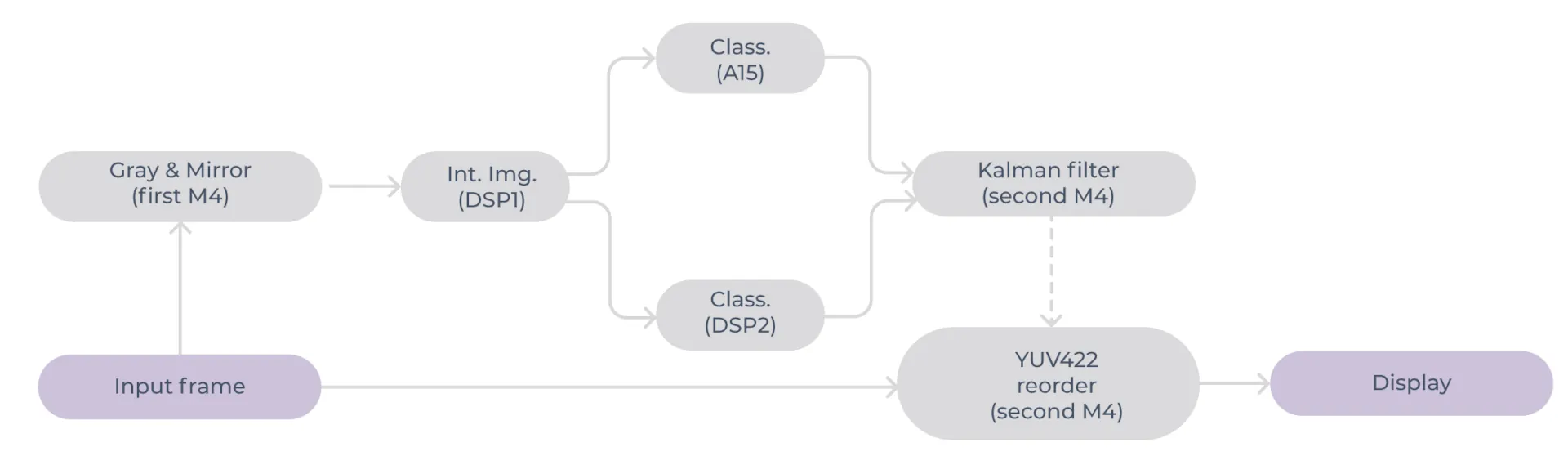

To address the problems the approach was to:

- Reduce unnecessary load on A15 core

    - Transferring the whole video to the board and then streaming it from memory (this is a realistic scenario because real data would come from cameras)

- Rearrange the algorithm plugins

    - Splitting classification on A15 and one DSP

        - The majority of hypotheses processed on A15

        - Second DSP used to reduce load on A15

        - Calculation time ~105ms

    - Integral image moved to first DSP

        - Initially poor performance, calculation took ~184ms

    - Kalman filter and YUV422 bytes reordering moved to M4

        - Calculation time ~54ms

This brought us to the second iteration of the decomposition, with the better hardware utilization depicted in Figure 3. This was the starting point for the optimizations. For the classification part of the algorithm, the general optimizations focused on the following:

- As hypotheses are generated at startup and the classification of one ROI doesn’t affect the others, the hypotheses can be split and processed in parallel

- Tabulating repetitive calculations

- Analysis and removal of unnecessary calculations

- Branching removal

- Switching from division to multiplication with reciprocal value where possible

- Usage of intrinsics

After analysis, we identified stages 1, 2 and 5 as the most critical stages of the classification. To address them, we precalculated lookup tables. For stage 1, each ROI gets four precalculated pointer offsets into the integral image and the reciprocal of its area, because everything is known when the ROIs are generated, which happens in the initialization phase before any processing. For stage 2 we used a similar technique and stored the scaled rectangles for each sub-stage of stage 2. Reusing the stage 1 trick directly is not a good approach here: stage 2 has three sub-stages, which would make the lookup table three times bigger and put too much pressure on memory.
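The stage 1 lookup table described above can be sketched roughly as follows. This is a minimal illustration and not the original code: the `RoiLut` layout, the function names, the `uint32_t` integral image with an extra zero row and column, and the use of the ROI mean as the computed value are all assumptions made for the example.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical per-ROI lookup entry, filled once in the initialization phase. */
typedef struct {
    size_t a, b, c, d;  /* offsets of the four ROI corners in the integral image */
    float  inv_area;    /* 1 / (width * height): replaces a per-frame division */
} RoiLut;

/* Integral image with one extra zero row and column (assumed layout):
 * ii[y * (w + 1) + x] = sum of src over rows < y and columns < x. */
static void build_integral(const uint8_t *src, int w, int h, uint32_t *ii)
{
    size_t stride = (size_t)w + 1;
    for (int x = 0; x <= w; ++x) ii[x] = 0;
    for (int y = 1; y <= h; ++y) {
        uint32_t row = 0;                       /* running sum of the current row */
        ii[(size_t)y * stride] = 0;
        for (int x = 1; x <= w; ++x) {
            row += src[(size_t)(y - 1) * w + (x - 1)];
            ii[(size_t)y * stride + x] = ii[(size_t)(y - 1) * stride + x] + row;
        }
    }
}

/* Done once per ROI at startup, when position and size are already known. */
static RoiLut make_roi_lut(int x, int y, int rw, int rh, int img_w)
{
    size_t stride = (size_t)img_w + 1;
    RoiLut e;
    e.a = (size_t)y * stride + x;               /* top-left */
    e.b = (size_t)y * stride + x + rw;          /* top-right */
    e.c = (size_t)(y + rh) * stride + x;        /* bottom-left */
    e.d = (size_t)(y + rh) * stride + x + rw;   /* bottom-right */
    e.inv_area = 1.0f / (float)(rw * rh);
    return e;
}

/* Per-frame work: four loads, three add/subtracts and one multiplication. */
static float roi_mean(const uint32_t *ii, const RoiLut *e)
{
    uint32_t sum = ii[e->d] - ii[e->b] - ii[e->c] + ii[e->a];
    return (float)sum * e->inv_area;
}
```

The point of the sketch is that no address arithmetic and no division remain in the per-frame path; the cost is one table entry per ROI, which is why tripling the table for the stage 2 sub-stages was considered too expensive.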

Figure 3. – Second iteration of optimization

For the A15 core specific optimization techniques we focused on:

- Usage of vectorized SIMD instructions (ARM NEON)

- Operating on 128-bit registers (e.g. four single-precision floating-point numbers or 16 unsigned bytes)

- Using ARM NEON

- Using lookup tables helped, but the effect was smaller than expected because of newly induced cache misses

- Exploiting cache prefetch instruction in combination with lookup table – use lookup table to prefetch data that will be needed shortly
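The combination of lookup table and prefetch from the last point could look roughly like the sketch below. It is an illustration under assumptions: the `RoiEntry` layout and the function name are hypothetical, the prefetch distance of one ROI is arbitrary, and `__builtin_prefetch` is the GCC/Clang builtin, used here in place of whatever prefetch mechanism the original code relied on.

```c
#include <stddef.h>
#include <stdint.h>

#if defined(__GNUC__) || defined(__clang__)
#define PREFETCH(p) __builtin_prefetch((p), 0, 3)  /* read access, high locality */
#else
#define PREFETCH(p) ((void)(p))                    /* no-op on other compilers */
#endif

/* Hypothetical lookup entry: corner offsets into the integral image. */
typedef struct {
    uint32_t a, b, c, d;
    float    inv_area;
} RoiEntry;

/* While ROI i is being processed, the table already tells us which
 * integral-image addresses ROI i + 1 will touch, so we can request them early. */
static void roi_means_prefetched(const uint32_t *ii, const RoiEntry *lut,
                                 size_t n, float *out)
{
    for (size_t i = 0; i < n; ++i) {
        if (i + 1 < n) {
            const RoiEntry *next = &lut[i + 1];
            PREFETCH(&ii[next->a]);
            PREFETCH(&ii[next->b]);
            PREFETCH(&ii[next->c]);
            PREFETCH(&ii[next->d]);
        }
        const RoiEntry *e = &lut[i];
        uint32_t sum = ii[e->d] - ii[e->b] - ii[e->c] + ii[e->a];
        out[i] = (float)sum * e->inv_area;
    }
}
```

How far ahead to prefetch depends on the memory latency and on the work done per ROI, so the distance would need tuning on the target.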

For the DSP specific optimization techniques we focused on:

- Replacing division with fast reciprocal value intrinsic + multiplication

- To achieve the same precision, two additional iterations of the Newton-Raphson method were applied

- Using DSP specific SIMD intrinsics

- Unraveling data dependencies
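The reciprocal refinement mentioned above can be illustrated with portable C. Each Newton-Raphson step x' = x * (2 - d * x) roughly doubles the number of correct bits of an estimate x of 1/d. In this sketch `coarse_recip` is only a stand-in that emulates a low-precision estimate by truncating the mantissa to 8 bits; on the DSP that role would be played by the fast reciprocal intrinsic, whose exact precision is not stated in the text and is assumed here.

```c
#include <stdint.h>
#include <string.h>

/* Stand-in for a hardware reciprocal estimate (assumption): computes 1/d
 * and keeps only the top 8 mantissa bits to emulate a coarse result. */
static float coarse_recip(float d)
{
    float r = 1.0f / d;
    uint32_t bits;
    memcpy(&bits, &r, sizeof bits);
    bits &= 0xFFFF8000u;            /* clear the low 15 of 23 mantissa bits */
    memcpy(&r, &bits, sizeof r);
    return r;
}

/* Two Newton-Raphson steps: about 8 -> 16 -> full single-precision bits. */
static float fast_recip(float d)
{
    float x = coarse_recip(d);
    x = x * (2.0f - d * x);
    x = x * (2.0f - d * x);
    return x;
}

/* Division replaced by a multiplication with the refined reciprocal. */
static float fast_div(float n, float d)
{
    return n * fast_recip(d);
}
```

When the divisor is known in advance, as with the ROI areas, the reciprocal can be tabulated once and the refinement cost disappears from the per-frame path.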

For the other CPU-heavy part of the algorithm, the integral image, we used the following techniques:

- Heavy usage of DSP intrinsics

- Preventing cache misses by removing lookup table for magnitude and bins

    - If dx is positive, a simple comparison between dx and the absolute value of dy gives the bin value

    - If dx is negative, comparing the absolute values of dx and dy again gives the bin value

    - To remove branching, the bin value is calculated by comparing abs(dx) and abs(dy) and then applying the following correction if dx is negative:

        - Use abs(dx+1) instead of abs(dx)

        - XOR the bin value with 3 (1 ^ 3 = 2, 0 ^ 3 = 3; this follows the symmetry of the bin distribution)

- Replacing expensive floating point calculation with integer multiplication and shifting
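The branch-free bin selection described in the list can be sketched as below. The text gives the correction steps but not the exact comparison, so the direction of the comparison (`abs(dy) > abs(dx)` yielding 1) and the resulting bin numbering are assumptions; the function name is hypothetical as well.

```c
#include <stdlib.h>

/* Returns a bin in 0..3 without an explicit branch on the sign of dx.
 * Assumed convention: for dx >= 0, the bin is 1 when |dy| > |dx|, else 0. */
static inline int hog_bin(int dx, int dy)
{
    int neg  = dx < 0;            /* 1 when dx is negative, otherwise 0 */
    int adx  = abs(dx + neg);     /* abs(dx + 1) for negative dx, abs(dx) otherwise */
    int base = abs(dy) > adx;     /* comparison result, 0 or 1 */
    return base ^ (-neg & 3);     /* XOR with 3 only when dx is negative */
}
```

With this convention `hog_bin(5, 2)` gives 0 and `hog_bin(2, 5)` gives 1, while the mirrored inputs `hog_bin(-2, 5)` and `hog_bin(-5, 2)` give 2 and 3, matching the `1 ^ 3 = 2` and `0 ^ 3 = 3` mapping from the text. Because the bin comes from a comparison instead of a table lookup, it adds no cache traffic.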

Final results

After this time-boxed optimization, the algorithm runs on the target platform and processes video at ~12 FPS. Average times for each part:

- Gray & Mirror ~75ms

- Integral image of HoG ~72ms

- Classifier A15 ~60ms

- Classifier DSP ~65ms

- Kalman filter ~4ms

- YUV 422 reorder ~50ms

Conclusion and possible improvement

All in all, we have shown that porting and optimizing the PC algorithm is possible. Of course, there is always room for improvement. Given more time, we would love to see the results of the following:

- Use non-blocking DMA transfer to SRAM memory

- Overlapping memory transfer and processing

- Overhead for first block only

- Reconfiguring DSP memory

- 128KB L2 data cache

- 128KB SRAM -> utilize non-blocking DMA!

- Move some algorithm plugins to EVE (freeing the DSPs in the process)

- Great performance expected for integral image and YUV 422 reorder

- Use both DSPs and A15 for classification
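The first proposed improvement, overlapping memory transfer and processing, is commonly done with two alternating buffers. The sketch below only simulates the idea on any host: `dma_start` and `dma_wait` are hypothetical stand-ins implemented with `memcpy`, not the API of a real DMA driver, and the block size and the per-block work are arbitrary.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define BLOCK 256  /* bytes per block; arbitrary for this sketch */

/* Hypothetical DMA stand-ins. A real port would start an asynchronous
 * transfer here and later wait for its completion. */
static void dma_start(void *dst, const void *src, size_t n) { memcpy(dst, src, n); }
static void dma_wait(void) { /* nothing to wait for in this simulation */ }

/* Placeholder for the real per-block work: sums the bytes of a block. */
static uint32_t process_block(const uint8_t *p, size_t n)
{
    uint32_t s = 0;
    for (size_t i = 0; i < n; ++i) s += p[i];
    return s;
}

/* Ping-pong scheme: while block b is processed from one buffer,
 * block b + 1 is already being transferred into the other one. */
static uint32_t process_frame(const uint8_t *frame, size_t len)
{
    static uint8_t sram[2][BLOCK];  /* stands in for fast on-chip SRAM */
    size_t nblocks = (len + BLOCK - 1) / BLOCK;
    uint32_t total = 0;
    if (nblocks == 0) return 0;

    /* Only this first transfer cannot be hidden behind processing. */
    dma_start(sram[0], frame, len < BLOCK ? len : BLOCK);

    for (size_t b = 0; b < nblocks; ++b) {
        size_t off = b * BLOCK;
        size_t n = (len - off < BLOCK) ? len - off : BLOCK;
        dma_wait();                 /* block b is now in sram[b & 1] */
        if (b + 1 < nblocks) {
            size_t noff = off + BLOCK;
            size_t nn = (len - noff < BLOCK) ? len - noff : BLOCK;
            dma_start(sram[(b + 1) & 1], frame + noff, nn);
        }
        total += process_block(sram[b & 1], n);
    }
    return total;
}
```

With a truly asynchronous transfer, the wait at the top of each iteration returns almost immediately as long as processing a block takes longer than transferring one, which is what "overhead for first block only" refers to.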