Introduction

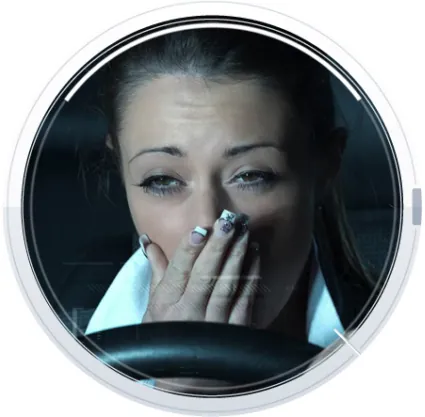

Most common failure point in vehicle-related accidents are drivers. There are various causes can distracts a driver from looking at the road in front of the car, all leading to possible accident.

Drowsiness can be detected by the rate at which points surrounding the eyes move up and down or the orientation of the head; either up, right, or tilted.

The challenge

The customer FotoNation approached us with an implementation of their DMS algorithm on an embedded platform Texas Instruments TDA3x. They were already performed certain optimization of the code, but were not satisfied with real time performance. The goal was to have algorithm working at 30 frames per second, thus, one video frame should be processed in less than 33ms and the client further extended requirement for processing of one video frame in less than 30ms. As the initial optimized algorithm port required ~ 88ms to process one frame, the task was to optimize (already optimized) code by 3x.

The optimization process

The optimization process included several steps that were performed until the goal has been reached. The first step is code profiling. After that, most critical parts have been analysed. Beside code analysis, most important step was an algorithm analysis. The C compiler that was used (Texas Instruments C66 compiler) is very good in generated fast code. The compiler is capable of detecting possibilities to reorder the code execution in a way that independent processing steps are done in parallel. The C66 DSP has two data pats, each with four functional units. Additionally, it can pack up to eight operations to be executed in one cycle (containing ALU, MAC, load and store operations). In some cases compiler failed to do that, although parallelisation was possible, due to unknown dependency of processing steps. In order to determine such dependencies one should analyse the algorithm and reorganise/modify the code in such a way that enables compiler to generate the code that will allow optimal execution on C66 DSP. This process also includes analysis of compiler generated assembly code with a goal to understand the performance bottlenecks.

In some cases, it was sufficient to change the way the algorithm is implemented on the target DSP architecture. However, in some cases there was a need to modify the algorithm itself. In that case, the first step is a proposal for algorithm modifications. If the proposed algorithm modification result in speed improvement, the modification has been explained to customer. Then, expert team has done the evaluation of performance (quality) of modified algorithm from the customer using extended set of tests. If the quality is not impaired, algorithm modification have been approved.

The complete process of code profiling, code and algorithm analysis, code and algorithm modification, evaluation of performance in terms of speed and quality of detection (is DMS still doing what is supposed to do) has been repeated until the optimization goal has been reached.

Examples of performed optimizations

Square root optimization

With careful inspection of generated assembly file we found that calculation of integer square root and the inverse calculation that follows were the most expensive code sections in one function. Replacing that with reciprocal square root calculation resulted in 10x improvement of the observed code section.

Reciprocal square root is calculated using _rsqrsp() C66x intrinsic which gives 8 bits precise result. In order to get more bits of precision, we did two iterations of Newthon-Raphson method. Change introduced difference in quality and that difference was approved by FotoNation.

Specialisation of function with a variable loop iteration

There were several functions containing a loop which number of iteration depends on the place the function is called. After the function is specialised – made a special version for an each use case – the number of iteration of demanding loop was fixed. This is an important optimization, since C66x compiler can schedule instruction in a more efficient way when the precise number of iterations is known.

Simplification of inner loop in complex loop

For complex loops, such as nested loops or/and conditional branches inside loops the effectiveness of the compiler may be compromised. When the situation becomes too complex the compiler might not be able to pipeline at all. In order to perform software pipelining for inner loop, here was necessary to simplify condition inside the loop. Just for sake of completeness, software pipelining is the most important property for fast implementation of any loop on C66x.

The compiler will if-convert small “if” statements (“if” statements with “if” and “else” blocks that are short or empty). The compiler does not if-convert large “if” statements (“if” statements where the “if” or “else” block is long). The compiler cannot software-pipeline loops with “if” statements which are not if-converted or eliminated in some other way (by programmer).

In one specific function “if” and “else” blocks had local variables and calculations which result in writing into a particular memory location. All expressions, which do not depend on conditional, from if-else block, were moved the block in order to perform if-convert of large “if” statements and simplify code for the compiler as much as possible. After this simple C code transformation, compiler was able to generate software pipelining for the loop which resulted in major speedup.

In addition to what is presented in above passage, some macros are eliminated (if converted) and minor code changes were done, but these changes did not result in significant performance gain. We did not change algorithm itself. Significant problem in this function, when considering performance, was very large inner non-optimized loop with complex conditional expression and because of that compiler was unable to perform software pipelining. Optimization of this function did not bring any changes in algorithm output. The gain in that specific function was ~2.7 time speed up.

Aligning loads and stores in the thigh loop

The problem with implementation of this loop is that compiler did not know anything about alignment of the processed data so the non-aligned loads and stores are used. Initially there were 8 loads and 4 stores (non-aligned) per loop iteration and since they are not aligned, 2 address paths are used per load/store.

In order to reduce number of loads, stores and address paths used in loop iteration, the processed data are aligned to 8, double aligned loads/stores are used and SIMD addition intrinsic is used _dadd2(). After the optimization, there were 2 loads, 1 store and 1 addition per loop iteration and software pipeline improved a lot for the loop. This optimization reduced time of the specific function for around 1.5ms per frame (~15%).

Calculating several results in parallel in a loop

In one time consuming loop the compiler did not find any schedule of the initial implementation of the loop because of the loop carried dependencies. In order to reduce loop carried dependencies, instead of calculating a single result for one loop iteration, implementation is changed to calculate the four results in a loop iteration. Four restrict pointers to input data are used and by doing this loop carried dependencies are reduced four times.

Using vector operations for 16 bits long data types

Briefly, when working with 16 bits long data types of C66x many operations can be done in parallel using SIMD instructions for 2 or more elements. In most cases, both SIMD and single argument instructions have the cycle counts, so usage of these instructions clearly increases throughput. We used _max2(), _min2() and _cmpgt2() intrinsics. These calculate maximum, minimum and greater-than comparison for 2 16 bits long integers respectively.

Inline function expansion

A function called inside a loop, prevents compiler from being able to perform software pipelining and loop optimization. In order to enable software pipelining the function is inserted inside the loop. This action is called inline function expansion, and is very advantageous when performing loop optimization. Several rather small functions are changed to be inline functions. This reduced execution time of function.

There were not any changes in algorithm due to such optimization. Only problem in this function, from the standpoint of optimization, was function call inside loop and because of that compiler was unable to perform software pipelining.

Summary

Reference was a highly optimized C code already running on the target platform – Texas Instruments TDA3x SoC with C66 DSP. The initial (optimized by customer) version of C code required 86 ms per frame. The final (optimized by RT-RK) version requires 28 ms per frame. The most significant improvement have been gain by algorithmic modifications and simplification (many unnecessary calculation steps have been removed).