Introduction

The SpacemiT K1 SoC, featured on boards like the Banana Pi BPI-F3, gives developers access to one of the first mass-market RISC-V platforms with RVV 1.0 support. But RVV alone doesn’t guarantee performance: on a core like the SpacemiT X60, the compiler must understand the microarchitecture well enough to make good scheduling decisions and avoid pipeline stalls.

For any compiler (like GCC), effectively targeting X60 requires modeling two distinct execution domains: the dual-issue in-order scalar pipeline and the 256-bit vector unit. In this blog series, we describe how to represent those behaviors in the compiler backend, so generated code better matches the real hardware. This first part focuses on the scalar side: implementing an instruction scheduling model and defining instruction latencies and resource constraints to help the compiler make consistent, cycle-aware scheduling choices on the X60.

Defining SpacemiT X60 Core Specifications and Pipeline Models in RISC-V GCC

In the previous article, we described the sifive-7-series microarchitecture. The SpacemiT X60 follows the same idea: if we want the compiler to generate optimized code for both the scalar pipeline and the vector unit, the core needs an explicit definition in the RISC-V backend.

A typical definition in riscv-cores.def identifies the core and its supported extensions:

    #define RISCV_CORE(CORE_NAME, ARCH, MICRO_ARCH)
    ...
    RISCV_CORE("spacemit-x60",
               "rv64imafdcv_zba_zbb_zbc_zbs_zicboz_zicond_zbkc_zfh_zvfh_zvkt_zvl256b_sscofpmf_xsmtvdot",
               "spacemit-x60")

A second entry in riscv-cores.def identifies the tune and its pipeline model:

    #define RISCV_TUNE(TUNE_NAME, PIPELINE_MODEL, TUNE_INFO)
    ...
    RISCV_TUNE("spacemit-x60", spacemit_x60, spacemit_x60_tune_info)

These definitions point GCC to the specific tuning parameters, such as instruction latencies, pipeline hazards, and vector costs, located in the machine description files (e.g., gcc/config/riscv/spacemit-x60.md). Without this specific tuning, the compiler defaults to a generic cost model that often fails to utilize the dual-issue capabilities or the full vector width of the hardware.
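To make the relationship between the two entries concrete, here is a minimal Python sketch. It is not GCC code: the `CORES` and `TUNES` dictionaries and the `resolve_cpu` helper are hypothetical stand-ins for the tables that the RISCV_CORE and RISCV_TUNE macros populate, filled with the values quoted above. It only shows how a core name leads to an architecture string (split here into its underscore-separated extensions) and to a pipeline model.

```python
# Toy model of the two riscv-cores.def tables (hypothetical, not GCC code).
# The values are copied from the RISCV_CORE / RISCV_TUNE entries quoted above.
CORES = {
    "spacemit-x60": {
        "arch": ("rv64imafdcv_zba_zbb_zbc_zbs_zicboz_zicond_zbkc_zfh_zvfh_"
                 "zvkt_zvl256b_sscofpmf_xsmtvdot"),
        "tune": "spacemit-x60",
    },
}
TUNES = {
    "spacemit-x60": {
        "pipeline_model": "spacemit_x60",
        "tune_info": "spacemit_x60_tune_info",
    },
}


def resolve_cpu(name):
    """Return (base ISA, multi-letter extensions, pipeline model) for a core."""
    core = CORES[name]
    base, *extensions = core["arch"].split("_")
    tune = TUNES[core["tune"]]
    return base, extensions, tune["pipeline_model"]


base, extensions, model = resolve_cpu("spacemit-x60")
print(base)                     # rv64imafdcv
print(model)                    # spacemit_x60
print("zvl256b" in extensions)  # True
```

On the command line, GCC's -mcpu= option selects both the architecture string and the tuning of a core, while -mtune= selects only the tuning.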

Features of SpacemiT X60 Cores

The SpacemiT X60 is designed to offer a balance between performance and power efficiency. It powers the SpacemiT K1 SoC, a chip that has recently been adopted in a variety of hardware platforms.

Besides the cited Banana Pi BPI-F3, the X60 architecture is currently used in:

- DeepComputing DC-ROMA Laptop II

- DeepComputing DC-ROMA PAD II

- Milk-V Jupiter

- Sipeed Lichee Pi 3A

- SpacemiT MUSE Book

The table below details the core specifications:

| Feature | Specification |
|---|---|
| Architecture | RISC-V 64-bit (RVA22 Profile) |
| Scalar Pipeline | 8-stage, Dual-issue, In-order |
| Vector Unit | RVV 1.0 with VLEN 256/128-bit and x2 execution width |
| L1 Cache | 32KB Instruction / 32KB Data per core |
| L2 Cache | 1MB (Cluster shared) |

To understand the optimization challenges, we must look at the two main execution domains of the processor:

Scalar Tuning: The Dual-Issue In-Order Pipeline

The SpacemiT X60 uses an 8-stage pipeline that can issue two instructions per cycle. Unlike out-of-order processors (such as the SiFive P550), the X60 executes instructions in the exact sequence they appear in the binary.

- The Challenge: The hardware does not automatically reorder instructions to hide latency. If the code contains adjacent dependent instructions, the pipeline will stall, breaking the dual-issue rate.

- The Solution: Software performance relies heavily on the compiler (or the assembly programmer) to interleave independent instructions, keeping both execution slots busy.
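The effect of instruction order on an in-order dual-issue core can be illustrated with a small toy model in Python. This is a sketch under simplifying assumptions, not a description of the real X60 front end: `issue_cycles` is a hypothetical helper that tracks only register dependencies, ignores execution-unit conflicts, and assumes a result becomes readable `latency` cycles after its producer issues.

```python
def issue_cycles(program, width=2):
    """Cycles needed to issue `program` on an in-order machine.

    program: list of (dest, sources, latency) tuples in program order.
    """
    ready = {}   # register -> first cycle in which its value can be read
    cycle = 0
    i = 0
    while i < len(program):
        issued = 0
        while i < len(program) and issued < width:
            dest, sources, latency = program[i]
            if any(ready.get(src, 0) > cycle for src in sources):
                break   # in-order: a stalled instruction blocks younger ones
            ready[dest] = cycle + latency
            i += 1
            issued += 1
        cycle += 1
    return cycle


# Two independent chains (a -> b and c -> d) of single-cycle operations.
chained = [("a", ["x"], 1), ("b", ["a"], 1), ("c", ["y"], 1), ("d", ["c"], 1)]
interleaved = [("a", ["x"], 1), ("c", ["y"], 1), ("b", ["a"], 1), ("d", ["c"], 1)]

print(issue_cycles(chained))      # 3
print(issue_cycles(interleaved))  # 2
```

Both sequences compute the same values; only the order differs. Placing each consumer directly after its producer leaves one issue slot empty in two of the three cycles, while the interleaved order fills both slots in every cycle.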

SpacemiT X60 GCC scalar tuning

In this chapter, we break down the scalar tuning implemented for the SpacemiT X60 in the GCC RISC-V backend. The focus is the in-order, dual-issue scalar pipeline: how we describe its execution resources and timing so GCC can make better instruction scheduling decisions.

Specifically, we cover:

- how the pipeline is represented using a custom automaton and CPU units (ALU0/ALU1, LSU0/LSU1, FP units), and what kinds of structural hazards those resources model;

- how instruction classes are mapped to reservations, including key latencies for loads/stores, integer ALU operations, branches, and long-latency operations such as division that create asymmetric bottlenecks;

- what bypass/forwarding modelling means for an in-order dual-issue core, why it matters for reducing artificial stalls, and where additional tuning can still improve scheduling quality.

Pipeline Automaton and CPU Units

The file begins by defining a custom automaton (define_automaton "spacemit_x60") and several CPU units. This establishes the fundamental resources that instructions will contend for:

    (define_automaton "spacemit_x60")
    (define_cpu_unit "spacemit_x60_alu0, spacemit_x60_alu1" "spacemit_x60")
    (define_cpu_unit "spacemit_x60_lsu0, spacemit_x60_lsu1" "spacemit_x60")
    (define_cpu_unit "spacemit_x60_vxu0" "spacemit_x60")
    (define_cpu_unit "spacemit_x60_fpalu" "spacemit_x60")
    (define_cpu_unit "spacemit_x60_fdivsqrt" "spacemit_x60")

    (define_reservation "spacemit_x60_lsu" "spacemit_x60_lsu0, spacemit_x60_lsu1")
    (define_reservation "spacemit_x60_alu" "spacemit_x60_alu0, spacemit_x60_alu1")

ALU0 & ALU1: Two integer arithmetic logic units allow two independent arithmetic or logical instructions to be issued in the same cycle. These two units together form a pipeline ALU.

LSU0 & LSU1: Two Load/Store units handle memory access. These two units together form a pipeline LSU.

FPALU & FDIVSQRT: Dedicated units for floating-point arithmetic and division/square-root operations.

VXU0: Vector unit, which is reserved for vector instructions.

This granularity allows the scheduler to simulate the state of the processor at every cycle. If the compiler detects two independent integer additions, it knows it can schedule them simultaneously on ALU0 and ALU1. Conversely, if the code demands a complex integer division (which locks ALU0), the scheduler understands that the pipeline faces a bottleneck.

Instruction Reservations and Latency Modeling

The core of this file lies in the define_insn_reservation lines. Each reservation describes how long a given instruction class occupies certain pipeline units. Some noteworthy examples:

    (define_insn_reservation "spacemit_x60_load" 5
      (and (eq_attr "tune" "spacemit_x60")
           (eq_attr "type" "load,fpload,atomic"))
      "spacemit_x60_lsu")

A load takes 5 cycles, occupying the LSU pipeline. Reading from memory takes time. By setting this to 5 cycles, we force the compiler to "look ahead": it attempts to fill the four cycles between a load and its first consumer with independent instructions, so the CPU stays busy while data is fetched from the cache.
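As a rough worked example of what the latency value means to the scheduler, the Python sketch below computes how long a consumer would wait and how many issue slots could be filled in the meantime. The helper names are hypothetical, and the slot count is only an upper bound that assumes both issue slots are usable in every waiting cycle.

```python
ISSUE_WIDTH = 2   # dual-issue, per the core specification table


def stall_cycles(latency, gap):
    """Cycles a consumer waits if it would otherwise issue `gap` cycles
    after its producer, given the producer's modeled latency."""
    return max(0, latency - gap)


def slots_to_fill(latency, gap=1):
    """Upper bound on issue slots available for independent work."""
    return stall_cycles(latency, gap) * ISSUE_WIDTH


print(stall_cycles(5, 1))   # 4
print(slots_to_fill(5))     # 8
print(stall_cycles(5, 5))   # 0
```

With the modeled load latency of 5, a consumer placed in the cycle right after the load waits four cycles; once the scheduler has moved it five cycles away, the wait disappears.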

    (define_insn_reservation "spacemit_x60_store" 3
      (and (eq_attr "tune" "spacemit_x60")
           (eq_attr "type" "store,fpstore"))
      "spacemit_x60_lsu")

A store takes 3 cycles, occupying the LSU pipeline. Writing is faster because the CPU does not wait for a response. However, we still mark it as 3 cycles to prevent the compiler from scheduling a long burst of store instructions, which would clog up the memory units and block important reads.

    (define_insn_reservation "spacemit_x60_jump" 1
      (and (eq_attr "tune" "spacemit_x60")
           (eq_attr "type" "branch,jump,call,jalr,ret,trap,sfb_alu"))
      "spacemit_x60_alu0")

    (define_insn_reservation "spacemit_x60_idivdi" 20
      (and (eq_attr "tune" "spacemit_x60")
           (and (eq_attr "type" "idiv")
                (eq_attr "mode" "DI")))
      "spacemit_x60_alu0*20")

    (define_insn_reservation "spacemit_x60_alu" 1
      (and (eq_attr "tune" "spacemit_x60")
           (eq_attr "type" "unknown,const,arith,shift,slt,multi,auipc,nop,logical,\
                            move,bitmanip,min,max,minu,maxu,clz,ctz,rotate,\
                            condmove,crypto,mvpair,zicond,cpop"))
      "spacemit_x60_alu")

A very important detail is hidden here. The code assigns both Division (idiv) and Branching (jump) to the same specific unit: spacemit_x60_alu0.

This creates a physical bottleneck. A 64-bit division takes 20 cycles to finish, keeping ALU0 completely busy. By defining this in the model, the compiler knows it cannot schedule a branch instruction while a division is in progress. Instead, it will try to fill the parallel ALU1 (accessed via the generic spacemit_x60_alu reservation) with other simple math operations, effectively preventing pipeline stalls. Crucially, this reflects the asymmetric nature of the SpacemiT X60’s execution lanes.

This isn't a design flaw, but a deliberate trade-off. As confirmed by the official SpacemiT X60 architecture docs, the SpacemiT X60 prioritizes 'minimal hardware cost' and efficiency. By keeping the silicon logic simple and offloading hazard management to the GCC 16 machine description, the chip remains compact and power-friendly, while the compiler does the heavy lifting to ensure no speed is left on the table.
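The ALU0 bottleneck can be sketched with a tiny reservation table in Python. This is an illustrative model, not how GCC's automaton is implemented: the class names and the `schedule` helper are hypothetical, data dependencies are ignored, and a generic ALU operation is assumed to be able to use either ALU0 or ALU1, as described in the text. The busy times (20 cycles for a 64-bit division, 1 cycle for jumps and simple ALU operations) come from the reservations quoted above.

```python
# instruction class -> (candidate units, cycles the chosen unit stays busy)
RESERVATIONS = {
    "idiv_di": (("alu0",), 20),          # 64-bit division: ALU0 only
    "jump":    (("alu0",), 1),           # branches and jumps: ALU0 only
    "alu":     (("alu0", "alu1"), 1),    # simple integer ops: either ALU
}


def schedule(classes):
    """Greedy in-order placement; returns [(class, unit, issue_cycle)]."""
    free_at = {"alu0": 0, "alu1": 0}   # first cycle each unit is free again
    cycle = 0
    placed = []
    for cls in classes:
        units, busy = RESERVATIONS[cls]
        unit = min(units, key=lambda u: free_at[u])   # earliest-free candidate
        cycle = max(cycle, free_at[unit])             # in-order: never go back
        free_at[unit] = cycle + busy
        placed.append((cls, unit, cycle))
    return placed


plan = schedule(["idiv_di", "alu", "alu", "jump"])
for entry in plan:
    print(entry)
# ('idiv_di', 'alu0', 0)
# ('alu', 'alu1', 0)
# ('alu', 'alu1', 1)
# ('jump', 'alu0', 20)
```

In this model the two simple operations flow through ALU1 while the division is running, but the jump cannot issue until cycle 20 because it competes with the division for ALU0.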

    (define_insn_reservation "spacemit_x60_falu" 4
      (and (eq_attr "tune" "spacemit_x60")
           (eq_attr "type" "fadd,fmul,fmadd"))
      "spacemit_x60_fpalu")

Floating-point math runs on its own unit (FPALU) with a standard 4-cycle delay, allowing it to execute in parallel with integer code.

Bypass Mechanisms

In a machine description, define_bypass statements, typically placed at the bottom of the file, describe forwarding paths. They are critical for avoiding unnecessary pipeline stalls when an instruction's result can be delivered directly to subsequent instructions without waiting for the register file write.

Right now, the SpacemiT X60 tune does not define any bypasses, but there are arguments for adding them:

- Reduction of Effective Latency: Without bypass definitions, the compiler assumes that a result is only available after the "Write-Back" stage. By defining bypasses, the scheduler learns that a result from ALU0 can be used by a subsequent instruction in the very next cycle, effectively reducing the perceived latency of arithmetic operations.

- Minimizing Pipeline Bubbles: In a dual-issue in-order core like the X60, Read-After-Write hazards are performance killers. Including a bypass mechanism allows the GCC scheduler to place dependent instructions closer together, preventing the insertion of unnecessary "bubbles" or NOPs that would otherwise stall both execution lanes.

- Optimization of the Dual-Issue Throughput: Since the SpacemiT X60 relies on filling two lanes (ALU0 and ALU1) to reach its peak IPC, a lack of bypass info often forces the compiler to be overly conservative. Defining bypasses between the two lanes ensures that the second execution port isn't left idle while waiting for a register update that has already been calculated in the first port.

- Hardware Fidelity: Most modern RISC-V cores, including the SpacemiT X60, have internal hardware forwarding logic. If the GCC machine description does not reflect this, the compiler is optimizing a "virtual" version of the chip that is slower than the actual silicon, leading to suboptimal instruction ordering in compute-intensive loops.

- Vector-to-Scalar Performance: Given that the X60 supports vector extensions (V-extension), bypasses are critical when moving data between vector and scalar registers. Proper bypass modeling ensures that scalar instructions waiting on vector reductions or memory loads are scheduled with minimal delay.
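In GCC's pipeline description, a bypass has the form (define_bypass latency producers consumers), and it overrides the producer's default latency for the listed consumers. The Python sketch below models that lookup. The default latencies come from the reservations shown earlier; the single bypass entry and its value are purely hypothetical, chosen only to show the mechanism, since the X60 model currently defines no bypasses.

```python
# Default latencies, taken from the define_insn_reservation entries above.
DEFAULT_LATENCY = {"load": 5, "falu": 4, "alu": 1}

# (producer class, consumer class) -> latency over a forwarding path.
# HYPOTHETICAL entry for illustration only; not a measured X60 value.
BYPASS = {("load", "alu"): 4}


def effective_latency(producer, consumer):
    """Latency the scheduler would assume between producer and consumer."""
    return BYPASS.get((producer, consumer), DEFAULT_LATENCY[producer])


print(effective_latency("load", "alu"))    # 4 (bypass applies)
print(effective_latency("load", "falu"))   # 5 (default latency)
```

Every producer/consumer pair without a bypass entry keeps the default latency, which is why missing bypass information makes the scheduler more conservative rather than incorrect.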

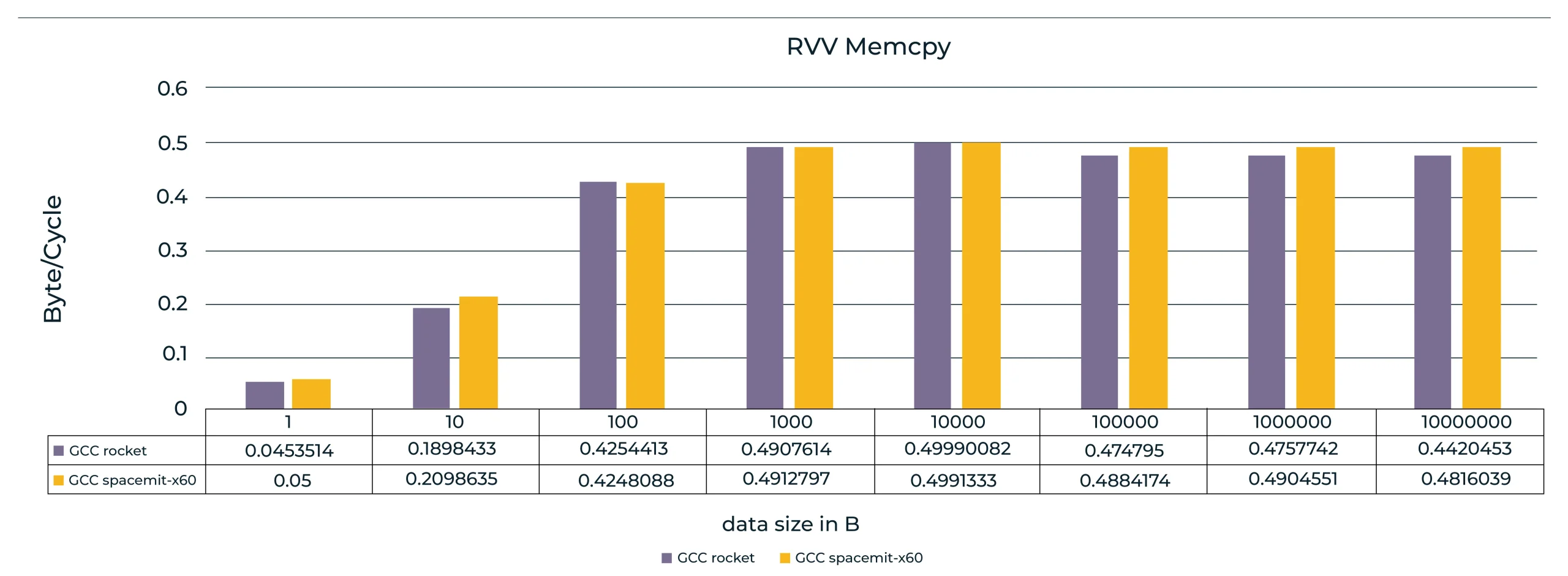

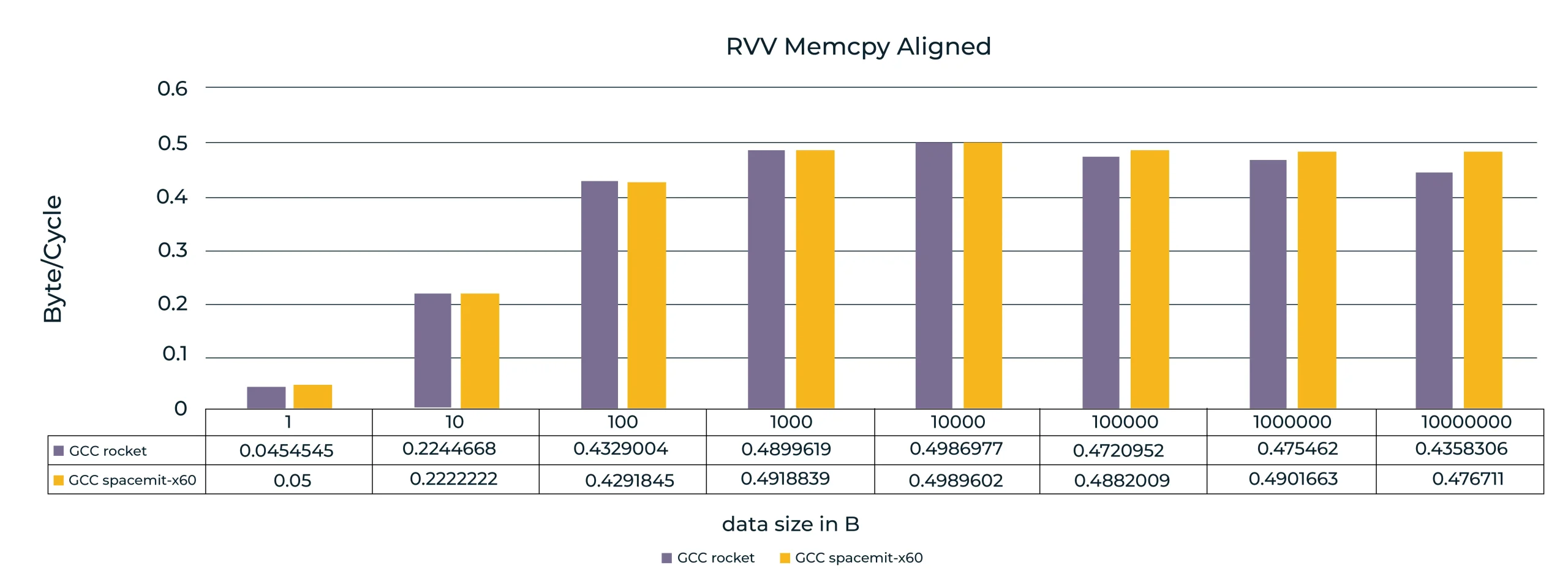

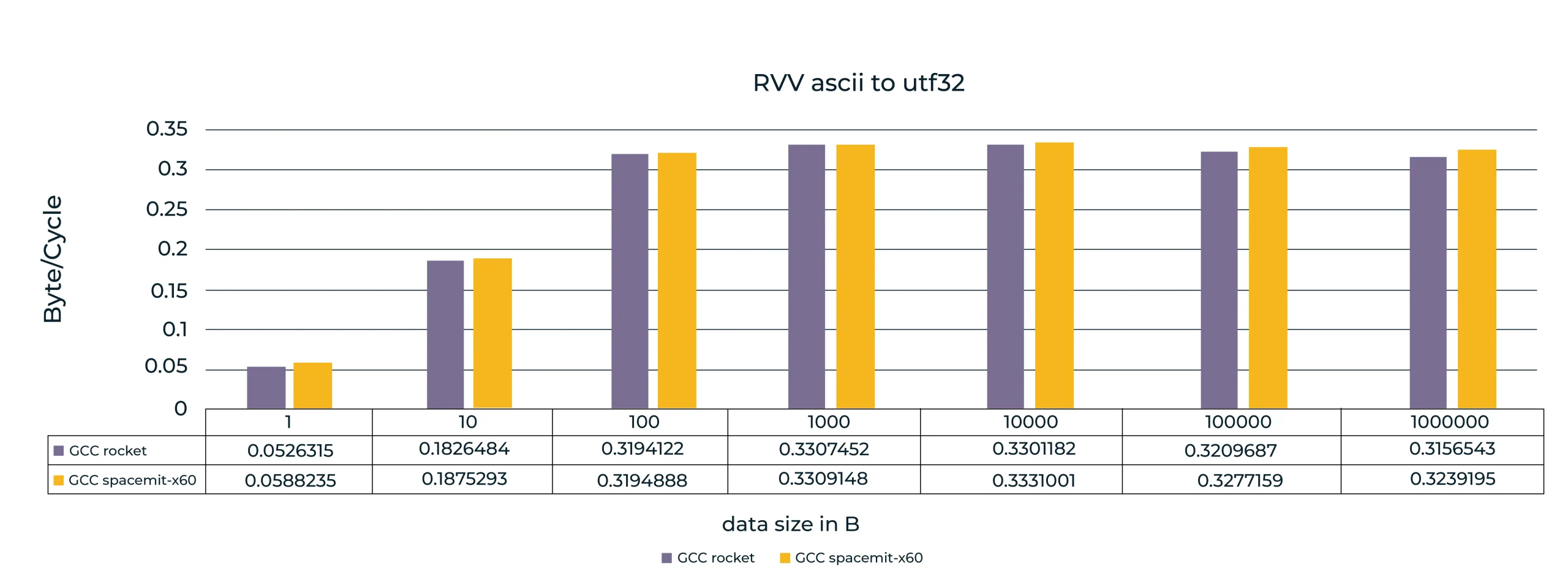

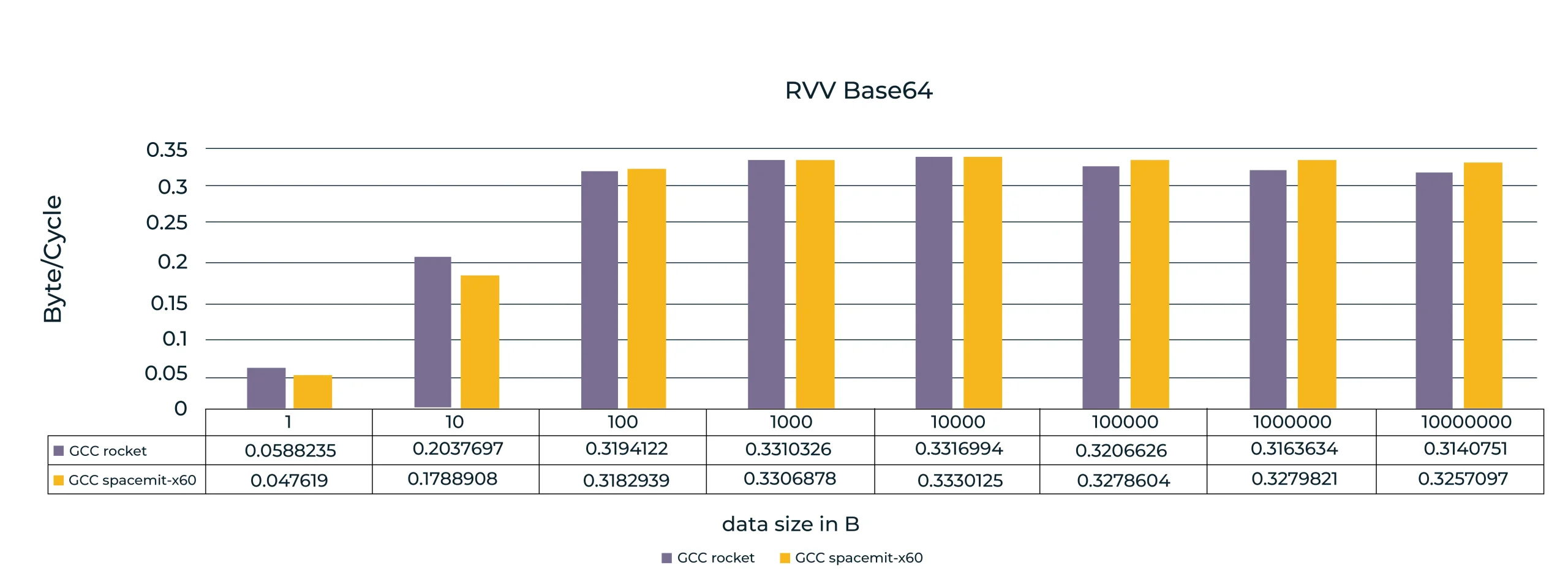

Benchmark results: Impact of scheduler scalar tuning

To validate the effectiveness of the SpacemiT X60 machine description, we conducted comparative benchmarks on a Banana Pi BPI-F3 (SpacemiT K1) development board. To ensure deterministic results and eliminate interference, all benchmarks were executed on a single core.

The performance is evaluated using different metrics across the test suite:

- Memory functions: Throughput measured in Bytes/Cycle (first five benchmarks).

- CoreMark: Performance measured in Iterations/Second.

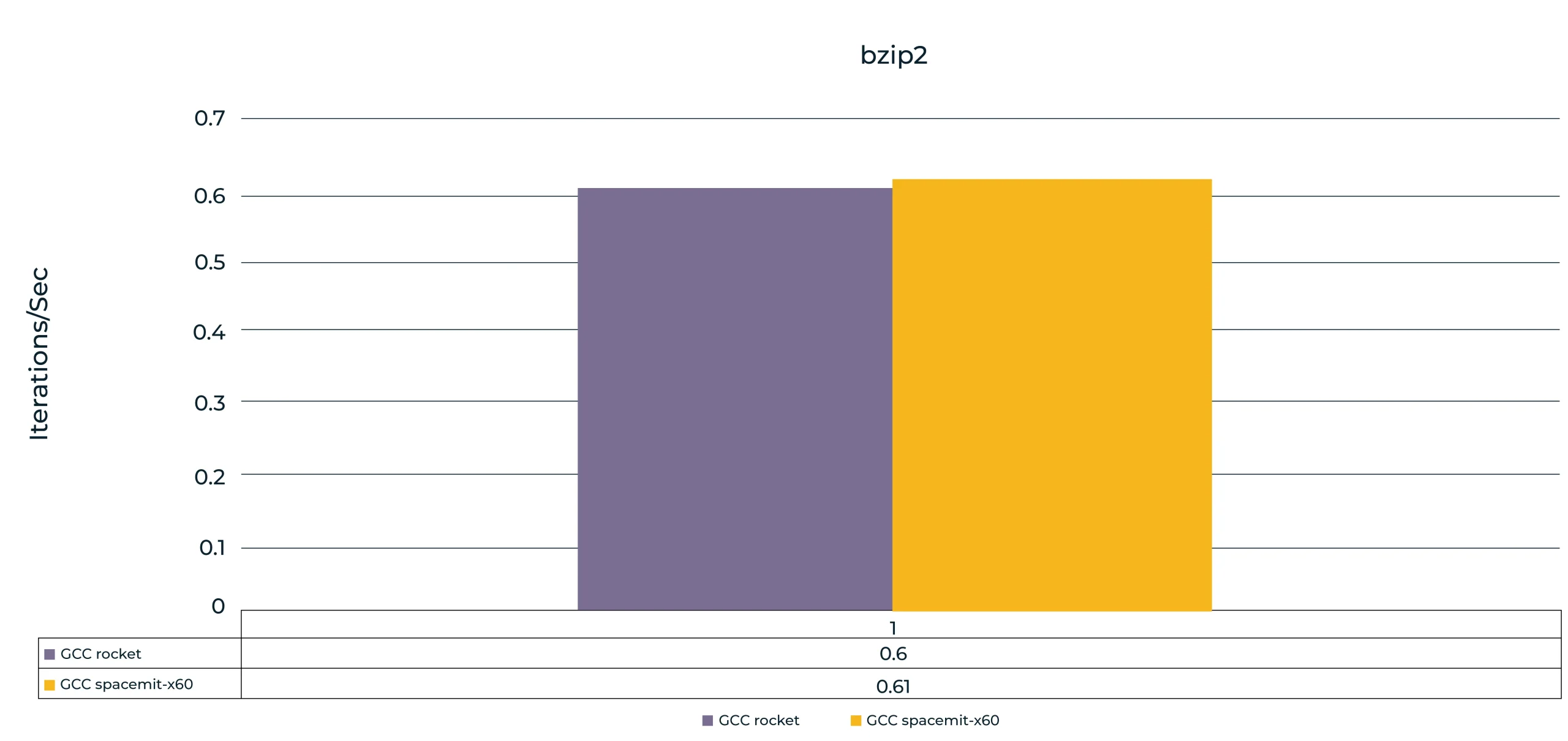

- bzip2: Efficiency measured in Instructions Per Cycle (IPC).

The charts below compare the generic rocket tuning (violet) against the SpacemiT X60 tuning (ocher), demonstrating the impact of accurate instruction scheduling on real-world performance.

Based on RVV scalar benchmarks, we observed performance improvements ranging from 3% to 7%, depending on the test and data size.

Based on the CoreMark benchmark, using the SpacemiT X60 tuning resulted in approximately a 2% performance improvement (iterations per second) compared to the rocket configuration.

Based on the bzip2 test on a 50 MB file, using the SpacemiT X60 tuning resulted in approximately a 0.7% performance improvement (instructions per cycle) compared to the rocket configuration.

Conclusion

To achieve peak performance, the compiler must model the target microarchitecture with sufficient accuracy. This is especially important for an in-order core like SpacemiT X60, where instruction throughput depends heavily on compile-time scheduling. Suboptimal ordering directly turns into pipeline bubbles and underutilized issue slots.

In this work, we focused on scalar tuning by defining the pipeline resources, instruction latencies, and key structural hazards in the machine description. With an X60-specific scheduling model, GCC can better account for dual-issue constraints and long-latency operations, and schedule independent instructions to reduce stalls. In our measurements, this translated into up to a 7% improvement in scalar performance and a corresponding gain in CoreMark, demonstrating the impact of precise latency/resource modeling on real workloads.

While this first part focused on the scalar pipeline and the foundational tuning of the machine description, the true potential of the SpacemiT X60 lies in its wide 256-bit vector units. In Part II, we will shift our focus to the RISC-V Vector (RVV) 1.0 implementation, exploring how the compiler handles high-bandwidth data processing and the specific challenges of optimizing vector-specific costs and register pressure within the GCC backend.

Dusan Stojkovic

Milan Tripkovic