SpacemiT X60 GCC vector tuning

The previous article, GCC tuning for SpacemiT X60: Building an in-order dual-issue scheduler model (Part I), explored the baseline machine description for the X60’s dual-issue in-order scalar pipeline. It examined the definition of the scheduling automaton and execution resources (ALU/LSU/FP units) and how scalar instruction classes are mapped to reservations and latencies.

Unlike scalar instructions, which have fixed latencies, vector instruction costs are dynamic. Execution time differs significantly based on the Length Multiplier (LMUL) and Selected Element Width (SEW). As a result, a static scheduling model that assumes a fixed cost for all vector operations fails to optimize pipeline throughput effectively.

This article focuses on implementing the vector scheduling model for the Vector Execution Unit (VXU) within GCC. It also introduces dynamic LMUL-based cost scaling and validates the scheduler using benchmarks from the LLVM test-suite and custom stress tests.

CPU Units

As described in Part I, the vector tuning models only a single vector unit, VXU0, even though the hardware has two. Internally, operations are split between the two units so that they act as one wider, faster unit. However, some operations can be issued to only one of the two units. In the absence of official documentation, the initial implementation models the vector pipeline as a single unit.

This simplified single-unit model provides a stable baseline for scheduling, while future versions of the model could include dual-issue support to better match the hardware's actual behavior.

The vector unit is defined in the machine description as:

(define_cpu_unit "spacemit_x60_vxu0" "spacemit_x60")

Instruction Reservations and Latency Modeling

Unlike scalar latencies, vector instruction latencies vary with the selected LMUL (Length Multiplier) and SEW (Selected Element Width). This initial model scales latencies only by LMUL, as it is the dominant factor in determining execution cost.

LMUL-based scaling is implemented directly in the scheduler through the riscv_sched_adjust_cost hook. The hook reads the instruction’s vlmul attribute (as a vlmul_type) and scales the base cost according to the effective LMUL value (e.g., ×1, ×2, ×4, ×8, ×0.5, ×0.25, or ×0.125). The adjustment is enabled through the -madjust-lmul-cost flag, which is implicitly activated when compiling with -mtune=spacemit-x60. This keeps the machine description compact while allowing instruction latency to reflect the effective vector register grouping during scheduling.
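The scaling described above can be sketched in plain C. This is only an illustration, not the code of the riscv_sched_adjust_cost hook: the lmul_t enum, the scale_cost_by_lmul function, and the rounding policy for fractional LMUL (round up, never below one cycle) are assumptions made for this example.

```c
/* Hypothetical stand-in for the values of GCC's vlmul attribute. */
typedef enum {
    LMUL_F8, LMUL_F4, LMUL_F2,      /* fractional: 1/8, 1/4, 1/2 */
    LMUL_1, LMUL_2, LMUL_4, LMUL_8
} lmul_t;

/* Scale a base (LMUL=1) cost by the effective LMUL.
   Assumption: fractional results are rounded up, so a positive
   base cost never drops below one cycle. */
int scale_cost_by_lmul(int base_cost, lmul_t lmul)
{
    switch (lmul) {
    case LMUL_2:  return base_cost * 2;
    case LMUL_4:  return base_cost * 4;
    case LMUL_8:  return base_cost * 8;
    case LMUL_F2: return (base_cost + 1) / 2;
    case LMUL_F4: return (base_cost + 3) / 4;
    case LMUL_F8: return (base_cost + 7) / 8;
    case LMUL_1:
    default:      return base_cost;
    }
}
```

With a base cost of 12, this sketch yields 96 at LMUL=8 and 6 at LMUL=1/2. Keeping the scaling in one function, rather than in one reservation per LMUL, mirrors the motivation given above for keeping the machine description compact.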

The latencies and resource occupancy values in this cost model are derived from llvm-mca simulations of the existing SpacemiT X60 LLVM scheduling model and were validated with RVV instruction-level microbenchmarks from the camel-cdr/rvv-bench repository on a Banana Pi BPI-F3 board.

The pipeline occupancy (the reservation cycles within the DFA) is intentionally clamped to a maximum of 7 cycles. A typical vector instruction reservation in the machine description is defined as:

(define_insn_reservation "spacemit_x60_vec_div" 12
  (and (eq_attr "tune" "spacemit_x60")
       (eq_attr "type" "vidiv"))
  "spacemit_x60_vxu0*7")

In this reservation, the latency is 12 cycles, while the unit is reserved for only 7. As noted by the GCC maintainers, capping the reservation at 7 cycles prevents a "DFA blowup," which would otherwise significantly increase the size of the generated automaton and the cost of building it. Because it is extremely difficult in practice for the scheduler to find enough independent instructions to cover latencies beyond a few cycles, this clamp keeps the model efficient with little to no impact on overall optimization.
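The cap on pipeline occupancy can be illustrated with a small C sketch. The macro and function names are hypothetical; only the limit of 7 cycles comes from the model described above.

```c
/* Maximum number of cycles a reservation may hold the vector unit in
   the DFA. The value comes from the text; the name is made up here. */
#define MAX_RESERVATION_CYCLES 7

/* Clamp a measured occupancy to the range the DFA is allowed to model.
   The instruction latency itself is not clamped. */
int clamp_reservation(int occupancy_cycles)
{
    if (occupancy_cycles < 1)
        return 1;
    if (occupancy_cycles > MAX_RESERVATION_CYCLES)
        return MAX_RESERVATION_CYCLES;
    return occupancy_cycles;
}
```

For an instruction that would occupy the unit for 12 cycles, clamp_reservation(12) returns 7, which matches the "*7" in the reservation string, while the latency field stays at 12.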

Performance Comparison: Dynamic LMUL Scaling vs. Fixed Latency Scheduling

Since LMUL determines the total amount of vector state processed, execution cost and latency must scale accordingly. Modeling instruction latency as a static value (e.g., assuming LMUL=1 for all operations) underestimates the cost of wider register groups. In real-world benchmarks that use a wide variety of LMULs, static modeling fails to provide the compiler scheduler with accurate cost information.

The stress_vcompress_heavy benchmark represents one of several stress tests used to evaluate the performance impact of replacing a fixed-latency baseline with dynamic LMUL-based cost scaling implemented through the scheduler hook. By adjusting instruction cost according to the effective LMUL at scheduling time, the compiler can make more accurate scheduling decisions for wider vector register groupings.

Benchmark Implementation: stress test

#include <stddef.h>
#include <stdint.h>
#include <riscv_vector.h>

int32_t stress_vcompress_heavy(const int32_t *src, size_t len) {
    size_t i = 0;
    vint32m1_t final_acc = __riscv_vmv_v_x_i32m1(0, __riscv_vsetvlmax_e32m1());
    while (i < len) {
        /* LMUL=1: load, build two masks, four dependent vcompress operations */
        size_t vl1 = __riscv_vsetvl_e32m1(len - i);
        vint32m1_t v1 = __riscv_vle32_v_i32m1(&src[i], vl1);
        vbool32_t m1_1 = __riscv_vmslt_vx_i32m1_b32(v1, 500, vl1);
        vbool32_t m1_2 = __riscv_vmsgt_vx_i32m1_b32(v1, 200, vl1);
        v1 = __riscv_vcompress_vm_i32m1(v1, m1_1, vl1);
        v1 = __riscv_vcompress_vm_i32m1(v1, m1_2, vl1);
        v1 = __riscv_vcompress_vm_i32m1(v1, m1_1, vl1);
        v1 = __riscv_vcompress_vm_i32m1(v1, m1_2, vl1);
        final_acc = __riscv_vadd_vv_i32m1(final_acc, v1, vl1);
        /* LMUL=2 */
        size_t vl2 = __riscv_vsetvl_e32m2(len - i);
        vint32m2_t v2 = __riscv_vle32_v_i32m2(&src[i], vl2);
        vbool16_t m2_1 = __riscv_vmsne_vx_i32m2_b16(v2, 0, vl2);
        vbool16_t m2_2 = __riscv_vmslt_vx_i32m2_b16(v2, 1000, vl2);
        v2 = __riscv_vcompress_vm_i32m2(v2, m2_1, vl2);
        v2 = __riscv_vcompress_vm_i32m2(v2, m2_2, vl2);
        final_acc = __riscv_vadd_vv_i32m1(final_acc, __riscv_vlmul_trunc_v_i32m2_i32m1(v2), vl1);
        /* LMUL=4 */
        size_t vl4 = __riscv_vsetvl_e32m4(len - i);
        vint32m4_t v4 = __riscv_vle32_v_i32m4(&src[i], vl4);
        vbool8_t m4_1 = __riscv_vmsgt_vx_i32m4_b8(v4, 100, vl4);
        vbool8_t m4_2 = __riscv_vmslt_vx_i32m4_b8(v4, 1400, vl4);
        v4 = __riscv_vcompress_vm_i32m4(v4, m4_1, vl4);
        v4 = __riscv_vcompress_vm_i32m4(v4, m4_2, vl4);
        final_acc = __riscv_vadd_vv_i32m1(final_acc, __riscv_vlmul_trunc_v_i32m4_i32m1(v4), vl1);
        /* LMUL=8 */
        size_t vl8 = __riscv_vsetvl_e32m8(len - i);
        vint32m8_t v8 = __riscv_vle32_v_i32m8(&src[i], vl8);
        vbool4_t m8_1 = __riscv_vmsne_vx_i32m8_b4(v8, 5, vl8);
        vbool4_t m8_2 = __riscv_vmsgt_vx_i32m8_b4(v8, 10, vl8);
        v8 = __riscv_vcompress_vm_i32m8(v8, m8_1, vl8);
        v8 = __riscv_vcompress_vm_i32m8(v8, m8_2, vl8);
        final_acc = __riscv_vadd_vv_i32m1(final_acc, __riscv_vlmul_trunc_v_i32m8_i32m1(v8), vl1);
        /* Advance by the number of elements covered by the LMUL=8 pass */
        i += vl8;
    }
    /* Reduce the accumulator and return the scalar result */
    vint32m1_t red = __riscv_vredsum_vs_i32m1_i32m1(final_acc, __riscv_vmv_v_x_i32m1(0, 1), 1);
    return __riscv_vmv_x_s_i32m1_i32(red);
}

The stress_vcompress_heavy function evaluates compiler scheduling efficiency by stressing the vector pipeline with a high density of vcompress operations. The benchmark operates on a 64-byte aligned 4,096-element array and executes for 5,000 iterations. The result is accumulated via vector reduction and stored in a volatile global sink to prevent compiler dead-code elimination, ensuring a consistent and valid hardware stress test.
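A driver matching this description might look like the sketch below. It is an assumption-laden illustration rather than the authors' harness: the names run_benchmark, kernel_fn, g_sink, and scalar_sum_kernel are hypothetical, the fill pattern for the input array is invented, and the kernel is passed as a function pointer so that a scalar stand-in can be used on machines without RVV.

```c
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

#define N_ELEMS 4096   /* array length stated in the text */
#define N_ITERS 5000   /* iteration count stated in the text */

/* Volatile global sink: stores to it cannot be removed as dead code. */
volatile int32_t g_sink;

typedef int32_t (*kernel_fn)(const int32_t *src, size_t len);

/* Scalar stand-in kernel (hypothetical) so the sketch builds anywhere. */
int32_t scalar_sum_kernel(const int32_t *src, size_t len)
{
    int32_t sum = 0;
    for (size_t i = 0; i < len; i++)
        sum += src[i];
    return sum;
}

/* Runs the kernel N_ITERS times over a 64-byte aligned array.
   Returns 0 on success, -1 if the allocation fails. */
int run_benchmark(kernel_fn kernel)
{
    int32_t *src = aligned_alloc(64, N_ELEMS * sizeof *src);
    if (src == NULL)
        return -1;

    /* Assumed fill pattern; the original input data is not specified. */
    for (size_t i = 0; i < N_ELEMS; i++)
        src[i] = (int32_t)(i % 1500);

    for (int it = 0; it < N_ITERS; it++)
        g_sink = kernel(src, N_ELEMS);

    free(src);
    return 0;
}
```

On the target board, stress_vcompress_heavy would be passed in place of scalar_sum_kernel, for example run_benchmark(stress_vcompress_heavy).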

By interleaving operations across LMUL=1, 2, 4, and 8, the benchmark exposes performance bottlenecks present in fixed-latency models. Because higher LMUL operations occupy execution units for significantly more cycles, an LMUL-aware scheduler is required to accurately predict register readiness.
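A toy calculation shows why an underestimated latency is costly on an in-order core. This is a deliberately simplified model, not a description of the X60 pipeline: the function names, the one-instruction-per-cycle assumption, and the latency numbers in the usage note are illustrative only.

```c
/* Independent instructions a scheduler would try to place between a
   producer and its consumer if it believes the producer's latency is
   modeled_latency (assuming enough independent work exists). */
int fillers_for(int modeled_latency)
{
    return modeled_latency > 1 ? modeled_latency - 1 : 0;
}

/* Cycles an in-order pipeline stalls before the consumer can issue.
   actual_latency: cycles until the producer's result is really ready.
   fillers: independent instructions placed in between, each assumed
            to take one issue cycle. */
int stall_cycles(int actual_latency, int fillers)
{
    int gap = fillers + 1;   /* earliest issue distance of the consumer */
    return actual_latency > gap ? actual_latency - gap : 0;
}
```

Suppose an operation takes 4 cycles at LMUL=1 and 16 cycles at LMUL=4. A fixed-latency model schedules for 4 cycles, so stall_cycles(16, fillers_for(4)) gives 12 stall cycles, whereas an LMUL-aware model gives stall_cycles(16, fillers_for(16)), which is 0 in this idealized setting.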

Performance Results

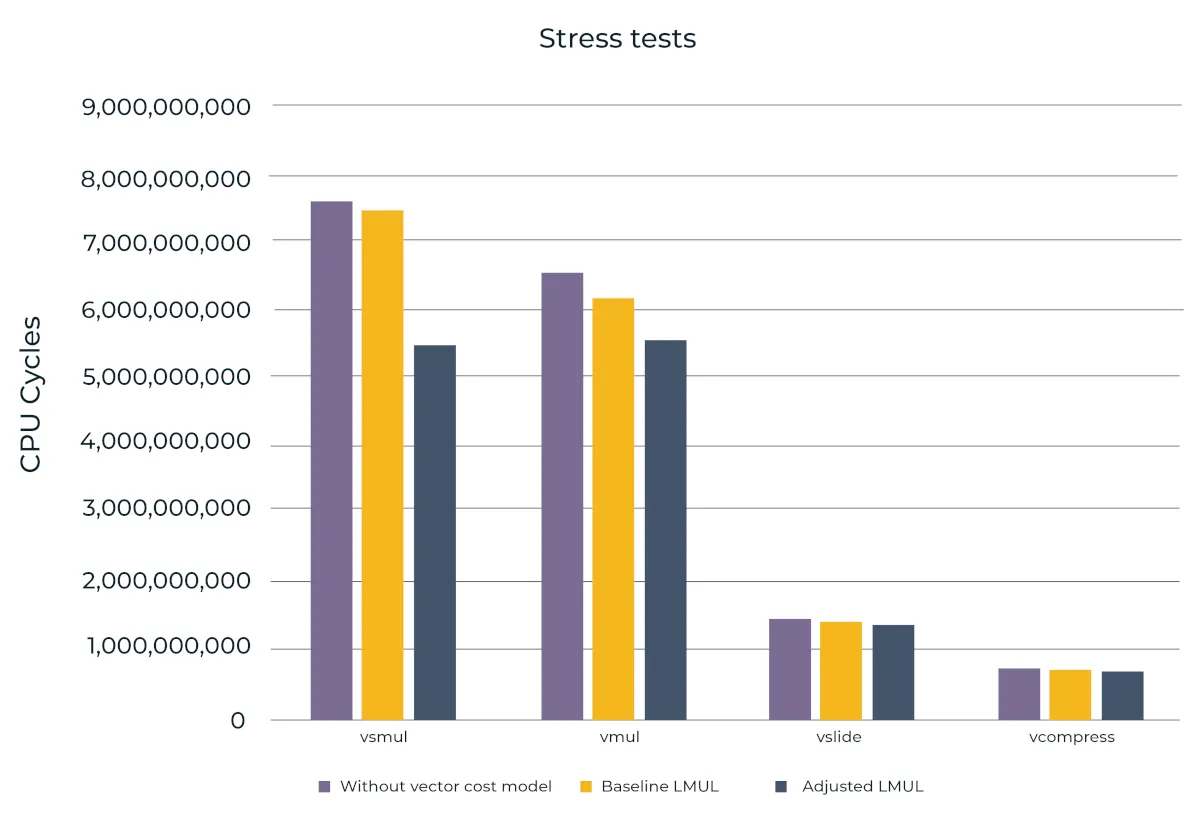

The following graph illustrates the performance impact across several stress tests when replacing a fixed-latency model (assuming LMUL=1) with dynamic LMUL-based cost scaling implemented in the scheduler, while also comparing against a configuration without vector cost modeling.

Figure 1. CPU cycle counts measured during vector stress tests

Performance was measured on a Banana Pi BPI-F3 board using a single RISC-V core. Metrics were collected with perf stat and averaged over 20 iterations to reduce measurement noise. Across all stress tests, the LMUL-adjusted model reduces total cycle count by 7.7% to 26.3% relative to the baseline fixed-latency (LMUL=1) cost model, and by 5.2% to 27.2% relative to a configuration without vector cost modeling, which supports the effectiveness of dynamic LMUL-based cost scaling in the scheduler.

Benchmark results: Impact of Vector Tuning

To evaluate the impact of the vector cost model, performance tests were run on benchmarks from the LLVM test-suite (MultiSource/Benchmarks). To ensure consistency and minimize noise, all benchmarks were executed using the following command:

taskset -c 0 perf stat -r 100 ./[benchmark]

By pinning the process to a single core and averaging the results over 100 runs, we obtained consistent CPU cycle measurements. The selected benchmarks, tramp3d-v4 and neural, show improvements of about 11% and 8% in execution cycles, respectively. Instruction counts remain effectively unchanged (<0.1%), which indicates that the gains come from better instruction scheduling and improved pipeline utilization rather than from a reduction in executed instructions.

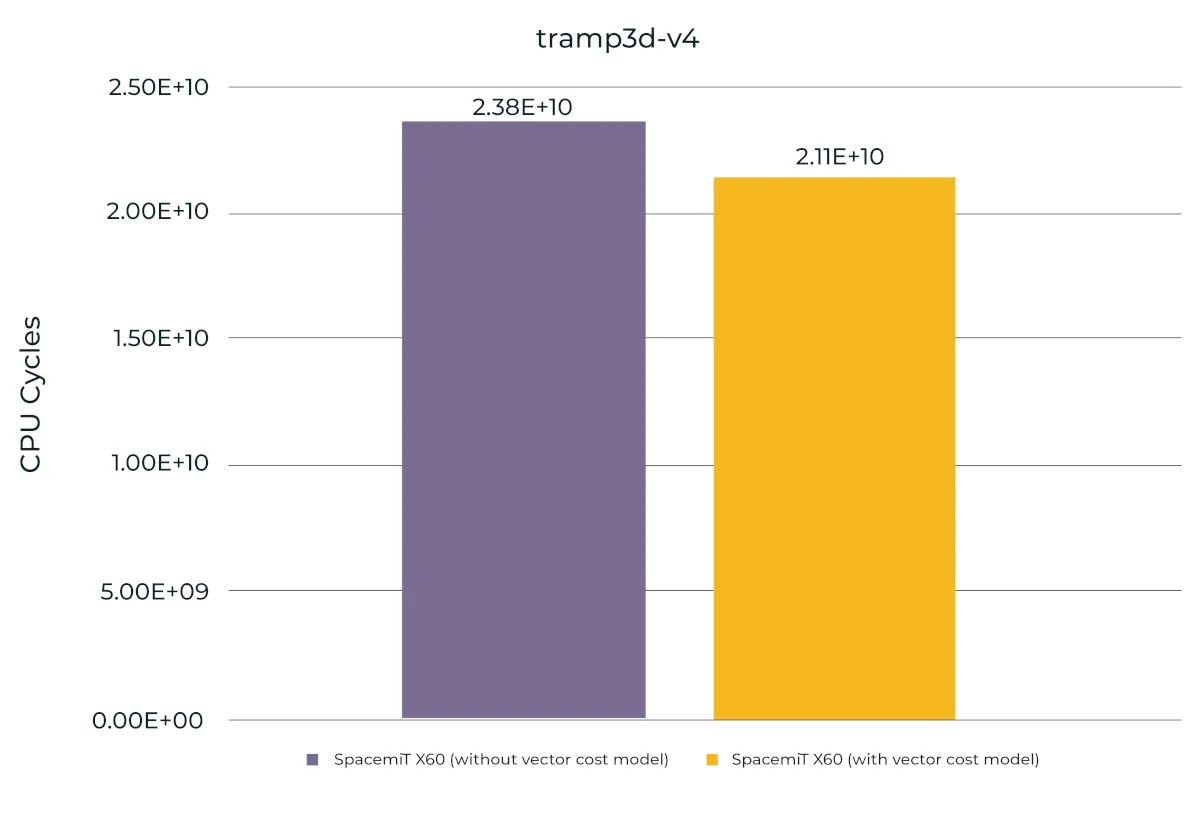

tramp3d-v4

tramp3d-v4 represents complex, template-heavy C++ applications built on the FreePOOMA library. To test the cost model under high-load conditions, the following parameters were used: --cartvis 1.0 0.0, --rhomin 1e-8, and -n 20. These settings significantly increase floating-point intensity and dependency pressure.

With the vector cost model, CPU cycles for this workload dropped from 2.38 × 10¹⁰ to 2.11 × 10¹⁰, an 11.41% improvement. This indicates that the model produces a more effective instruction schedule even in workloads with high computational density and complex data dependencies, where the scheduler is under heavy pressure.
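The improvement figure is a relative reduction in cycle count, which can be checked with a one-line helper. The function name percent_reduction is hypothetical, and the inputs below are the rounded values quoted above, so the result differs slightly from the reported 11.41%, which presumably was computed from the unrounded counter values.

```c
/* Relative reduction from `before` to `after`, in percent. */
double percent_reduction(double before, double after)
{
    return (before - after) / before * 100.0;
}
```

Calling percent_reduction(2.38e10, 2.11e10) gives approximately 11.3%.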

Figure 2. CPU cycle counts for the tramp3d-v4 benchmark with and without the vector cost model

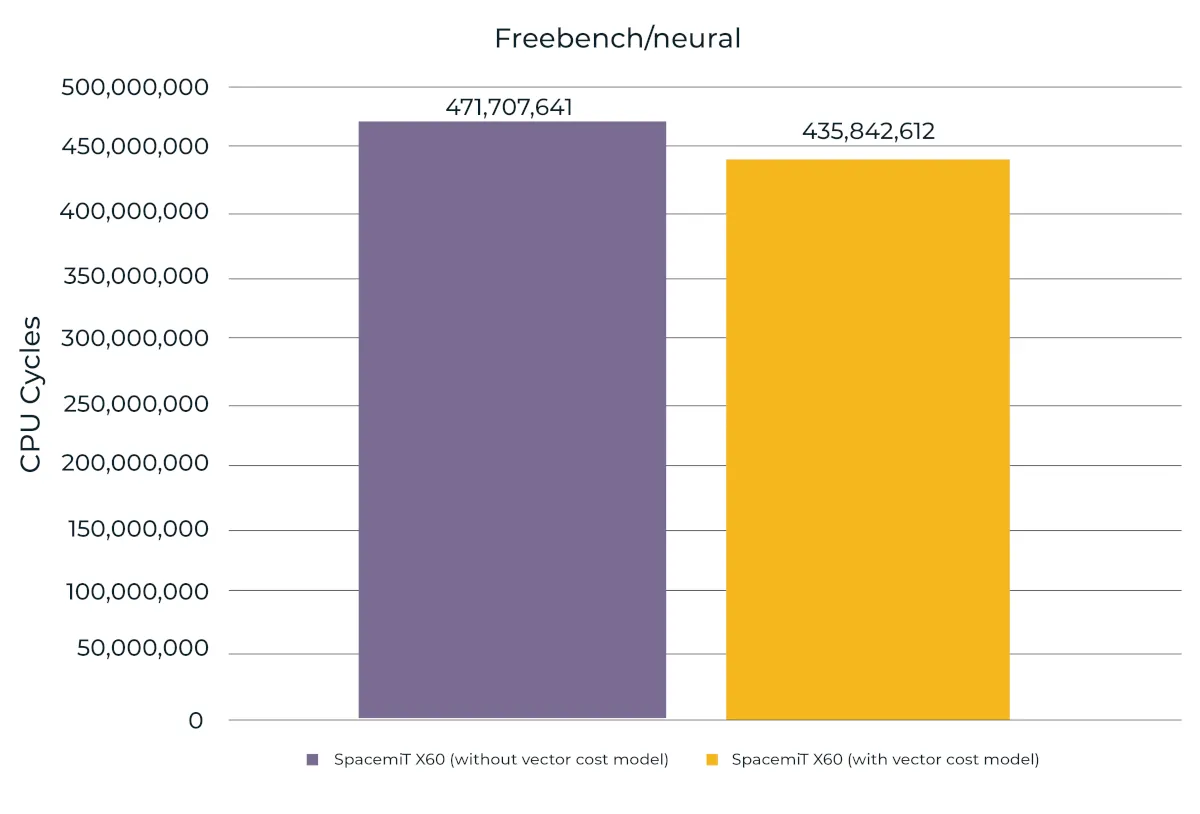

neural

The neural benchmark from the FreeBench suite was included to evaluate the model’s impact on neural network processing. This workload relies heavily on intensive matrix and vector-based arithmetic, which are core components of activation functions and weight calculations.

With the vector cost model enabled, CPU cycles for this benchmark improved by 8.23%. This suggests that accurate latency modeling enables the compiler to better schedule instructions within the computational kernels, leading to higher execution efficiency on the SpacemiT X60 core.

Figure 3. CPU cycle counts for the FreeBench neural benchmark with and without the vector cost model

Conclusion

To fully exploit RVV on SpacemiT X60, the compiler scheduler must account for the fact that vector instruction latency scales with register grouping. On an in-order, dual-issue core, any mismatch between the model and the real hardware shows up immediately as wasted issue slots and pipeline bubbles.

In this implementation, vector cost modeling was introduced into the RISC-V backend through dynamic LMUL-based scaling using the riscv_sched_adjust_cost hook. Instead of encoding separate reservations for each LMUL configuration in the machine description, the base instruction cost is adjusted at scheduling time according to the instruction’s effective LMUL. When -mtune=spacemit-x60 is selected, the -madjust-lmul-cost flag is enabled automatically, ensuring that LMUL scaling is applied for this microarchitecture. This approach keeps the machine description compact while allowing the scheduler to better approximate the execution cost of wide vector register groupings. The result is better scheduling, fewer pipeline stalls, and higher performance in heavily vectorized applications.

This implementation is an initial patch designed to provide a stable baseline. While the current model treats the vector unit as a single resource (VXU0), the hardware has two vector units. Future work could refine the pipeline description and expose the second unit to further improve scheduling accuracy.

The full patch has been submitted to the GCC mailing list and is available for review here.

Dusan Stojkovic

Nikola Ratkovac